I don’t think the OP agrees with you on that front.

OK, joke aside. We are talking about image formation (forming an image from open-domain/electromagnetic radiation data) and about how people didn’t really want what they thought they wanted (which is why Chris asked people what they meant when they said they wanted ACES).

If you believe that’s what we are talking about, then you didn’t get what I wrote.

Filmic skews too! Therefore

This is not correct.

What we want is to raise awareness of this issue, so that more people know the drawbacks instead of blindly following the trend.

As to how Filmic skews, I think we have a perfect example:

Halloween Spider Demo File by Rahul Chaudhary

Look at the glowing face of the pumpkin: with Filmic, it looks yellow.

Now let’s look at TCAMv2:

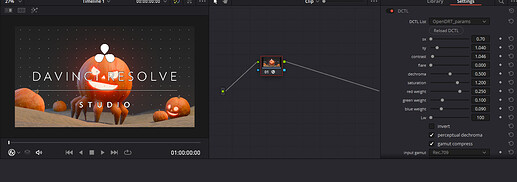

And let’s add a third-party reference, OpenDRT (using the Resolve version, since it has no OCIO version):

See? It’s supposed to be a reddish-orange color, but because Filmic skews too, it gets skewed to yellow.
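For anyone who wants to see this kind of skew in numbers, here is a minimal sketch. To be clear about the assumptions: `tone_curve` is a toy Reinhard-style curve applied to each channel separately, not Filmic’s actual transform; the `base` color is a made-up reddish-orange emission, not sampled from the demo file; and HSV hue is only a crude stand-in for perceived hue. It only illustrates how a per-channel curve can drag a bright orange toward yellow as intensity goes up.

```python
import colorsys

def tone_curve(x):
    """Toy Reinhard-style curve, applied per channel. NOT Filmic's real curve."""
    return x / (1.0 + x)

# Hypothetical scene-linear reddish orange (assumed values, not from the demo file).
base = (1.0, 0.25, 0.02)

def display_hue(exposure):
    """HSV hue in degrees after scaling `base` and applying the per-channel curve."""
    scene = tuple(c * exposure for c in base)
    display = tuple(tone_curve(c) for c in scene)
    hue, _, _ = colorsys.rgb_to_hsv(*display)
    return hue * 360.0

if __name__ == "__main__":
    # Pure yellow sits at 60 degrees in HSV; red sits at 0.
    for exposure in (1.0, 4.0, 16.0, 64.0):
        print(f"exposure x{exposure:>4}: hue = {display_hue(exposure):.1f} deg")
```

The brighter the emission, the more the red channel is compressed while green keeps catching up, so the hue angle climbs toward yellow even though the ratios in the scene data never changed.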

To solve this problem, first people need to be aware of it, and we need more attention on this matter.

That sounds problematic to me. I believe every artist should care about their image’s formation and the result of their artistic work.

I agree. But when this comes you no longer need ACES to have

What he asked was: