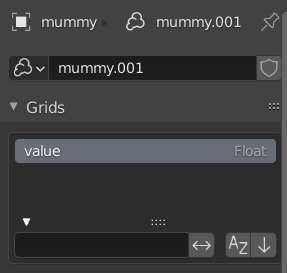

I’m trying to set up a scientific visualization of a volumetric dataset in VDB format that holds a single scalar field: 32-bit floating-point values in the range [0,1].

In sci-viz volume rendering you usually specify a transfer function that maps the field value at a given location in the volume (i.e. at a shading point) to a color and an opacity. Sometimes a single transfer function outputs both color and opacity; in other cases you specify them separately, with one function for color and one for opacity.
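To make the term concrete, here is a minimal, stand-alone Python sketch of a separable transfer function: one piecewise-linear ramp for color and one for opacity, both indexed by the scalar field value in [0,1]. The stop positions and colors are arbitrary illustrative choices, and `ramp` and `transfer` are hypothetical helpers, not Blender API. Linear interpolation between stops is roughly what a ColorRamp node set to Linear does, but this is not its implementation.

```python
from bisect import bisect_right

# Hypothetical stops for illustration only: (position in [0,1], value tuple), sorted by position.
COLOR_STOPS = [(0.0, (0.0, 0.0, 1.0)),   # low values: blue
               (0.5, (0.0, 1.0, 0.0)),   # mid values: green
               (1.0, (1.0, 0.0, 0.0))]   # high values: red
OPACITY_STOPS = [(0.0, (0.0,)),          # fully transparent up to 0.3 ...
                 (0.3, (0.0,)),
                 (1.0, (0.8,))]          # ... then ramping up to 0.8 at the top


def ramp(stops, x):
    """Piecewise-linear lookup of x in sorted (position, value) stops, clamped at both ends."""
    positions = [p for p, _ in stops]
    if x <= positions[0]:
        return stops[0][1]
    if x >= positions[-1]:
        return stops[-1][1]
    i = bisect_right(positions, x)          # positions[i-1] <= x < positions[i]
    (p0, v0), (p1, v1) = stops[i - 1], stops[i]
    t = (x - p0) / (p1 - p0)
    return tuple(a + t * (b - a) for a, b in zip(v0, v1))


def transfer(value):
    """Separable transfer function: field value in [0,1] -> (r, g, b, opacity)."""
    r, g, b = ramp(COLOR_STOPS, value)
    (opacity,) = ramp(OPACITY_STOPS, value)
    return (r, g, b, opacity)


if __name__ == "__main__":
    for v in (0.0, 0.25, 0.5, 1.0):
        print(v, transfer(v))
```

At v = 0.5 this gives pure green with an opacity of about 0.23. A combined (non-separable) transfer function would simply be a single ramp whose stops carry RGBA tuples.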

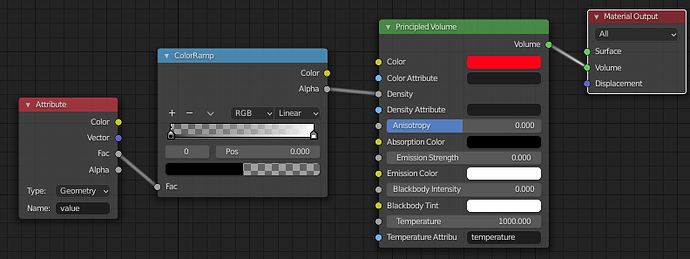

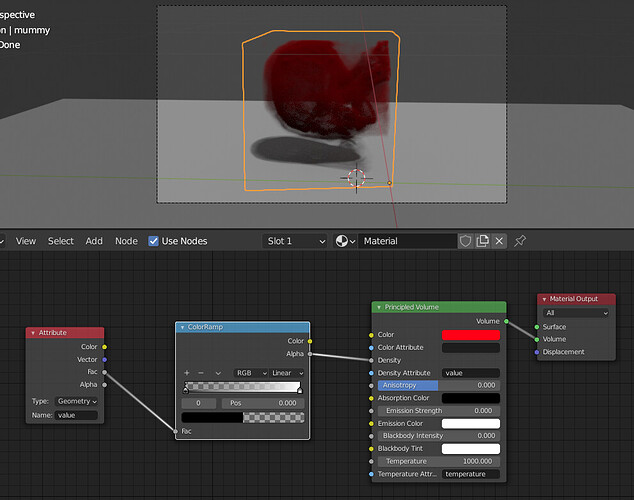

I see that the Principled Volume node (in 2.92) has inputs for color and density, but I’m having a hard time building some kind of “transfer function” from, say, the ColorRamp node and the available input nodes. So I’m wondering whether the current volume object and rendering setup supports this kind of general sci-viz volume rendering, given that it seems aimed mostly at rendering clouds, smoke, fire, etc.

Specifically:

- The Attribute node, when set to type Geometry, has a tooltip that says " within the volume", which sounds like it can provide volume-based information for a shading point. But setting the Name value to the volume attribute doesn’t seem to have any effect on the opacity during rendering in this node setup:

- Judging by the docs, the Volume Info node seems to be specific to smoke domains, but does that mean it won’t work on general VDB volumes? And does it provide values for the complete domain, or does it, like Geometry, give values per shading point? I assume the latter.

- The Geometry node might be another candidate for defining a transfer function, as its Position output could be used to sample the volume (and the result then passed through a ColorRamp, etc.), but I don’t see any volume sampler node.

- How do the Density input and the Density Attribute interact (and similarly for Color)? The docs don’t say much about the relation between the two. Does one override the other when set? Some tests with the possible combinations of connecting/setting them suggest something more complex is going on.

- Is the density value used in Blender mapped directly to opacity during rendering?
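For the last question, here is a sketch of the standard emission-absorption model used in many volume renderers. It is offered as an assumption, not as a description of what Cycles or Eevee do internally with the Principled Volume Density. In this model density is an extinction coefficient per unit length, so a ray segment of length Δs has opacity 1 − exp(−density · Δs) (Beer-Lambert) rather than an opacity equal to the density. `trilinear` stands in for the "volume sampler" mentioned in the Geometry node item. It, `segment_opacity` and `march` are hypothetical helpers, not Blender nodes or API, and the grid is a plain nested list rather than a VDB.

```python
import math


def trilinear(grid, x, y, z):
    """Trilinearly sample a dense grid[i][j][k] at continuous voxel coordinates, clamped to the grid."""
    nx, ny, nz = len(grid), len(grid[0]), len(grid[0][0])
    x = min(max(x, 0.0), nx - 1.0)
    y = min(max(y, 0.0), ny - 1.0)
    z = min(max(z, 0.0), nz - 1.0)
    i0, j0, k0 = int(x), int(y), int(z)
    i1, j1, k1 = min(i0 + 1, nx - 1), min(j0 + 1, ny - 1), min(k0 + 1, nz - 1)
    fx, fy, fz = x - i0, y - j0, z - k0

    def lerp(a, b, t):
        return a + t * (b - a)

    # Interpolate along x, then y, then z.
    c00 = lerp(grid[i0][j0][k0], grid[i1][j0][k0], fx)
    c10 = lerp(grid[i0][j1][k0], grid[i1][j1][k0], fx)
    c01 = lerp(grid[i0][j0][k1], grid[i1][j0][k1], fx)
    c11 = lerp(grid[i0][j1][k1], grid[i1][j1][k1], fx)
    return lerp(lerp(c00, c10, fy), lerp(c01, c11, fy), fz)


def segment_opacity(density, step):
    """Beer-Lambert: opacity of a slab of thickness `step` with extinction coefficient `density`."""
    return 1.0 - math.exp(-density * step)


def march(grid, origin, direction, step, n_steps, density_scale=1.0):
    """Front-to-back compositing along a ray; returns (accumulated grey value, total opacity).

    The sampled field value is used both as grey emission and (times density_scale)
    as extinction, purely for illustration.
    """
    value, alpha = 0.0, 0.0
    for n in range(n_steps):
        t = (n + 0.5) * step                      # midpoint of the n-th segment
        p = [o + t * d for o, d in zip(origin, direction)]
        v = trilinear(grid, *p)
        a = segment_opacity(density_scale * v, step)
        value += (1.0 - alpha) * a * v            # "over" operator, front to back
        alpha += (1.0 - alpha) * a
    return value, alpha


if __name__ == "__main__":
    grid = [[[0.5] * 4 for _ in range(4)] for _ in range(4)]   # constant field of 0.5
    print(march(grid, (0.0, 1.0, 1.0), (1.0, 0.0, 0.0), step=0.5, n_steps=6))
```

Under this model the opacity a region contributes depends on both the density value and the distance the ray travels through it, so a density of 1.0 does not by itself mean "fully opaque".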

Thanks in advance for any feedback!