Some previous writeups on the same topic:

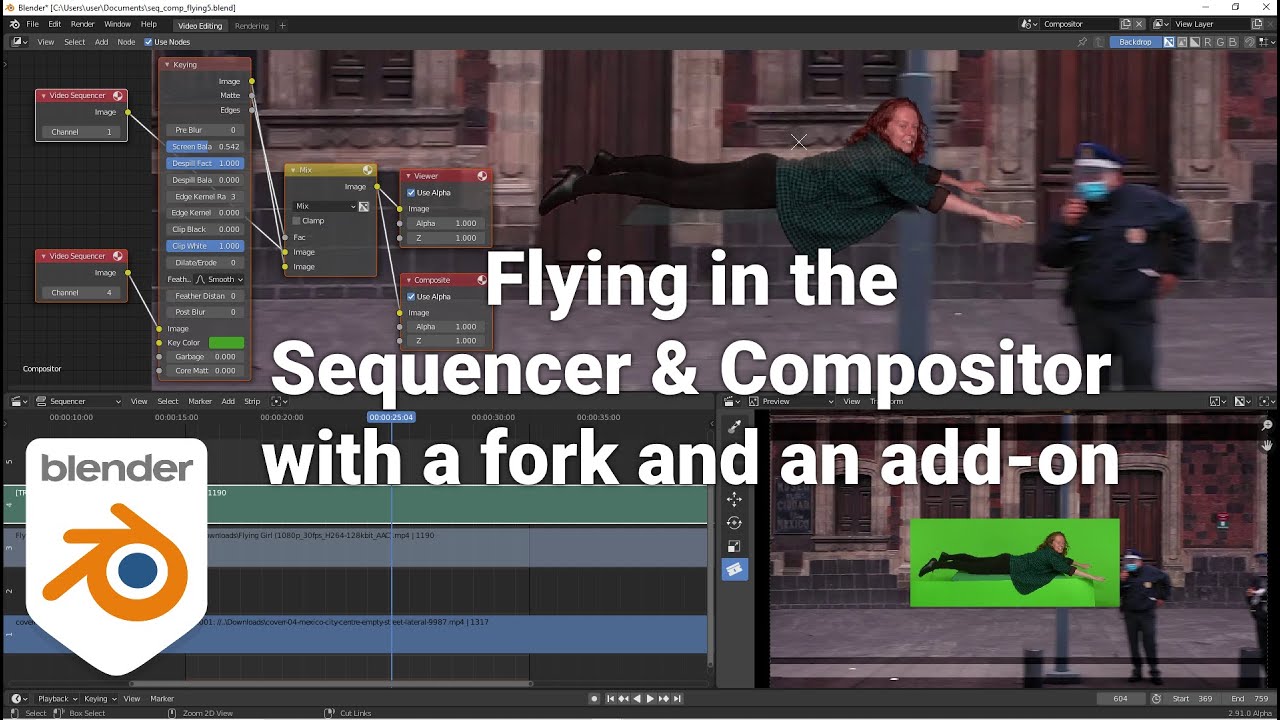

This fork added a sequencer node (which lets the user feed a VSE channel into the compositor):

The main culprit in Blender's current design is that the Sequencer is supposed to sit at the end of the process, and that the sequencer data is stored inside scenes. This means the sequencer can't reference things from, e.g., the 3D View or the compositor located within the same scene. I've written about solutions for that here:

And here:

In the old days, it was still possible to assign, e.g., elements from the 3D View to the sequencer of the same scene, which led to this very much industry-standard workflow (used in most game engines and 3D software):

When doing, e.g., GP storyboards, it is really counterintuitive that users have to switch scenes in order to edit the contents of a drawing/strip.

And here on the overall vision for the VSE:

As you can see, I have tried to bang the drum for this stuff over many years, but unsuccessfully. It's only during the Blender Studio productions that they realize the shortcomings of the VSE and throw in some hacks, but no BF dev cares about developing a proper vision for the VSE.

![Previz Camera Tools [Add On] for Blender](https://devtalk.blender.org/uploads/default/original/3X/5/5/5528a1af07a0e95a5a57a5fddb64c0310783c164.jpeg)