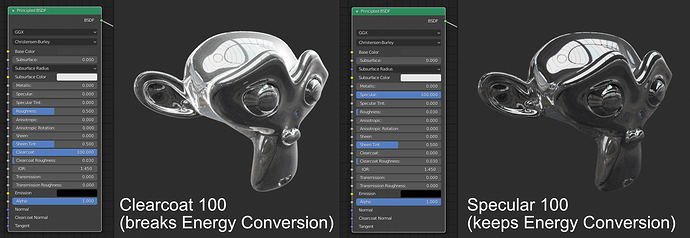

In the original Disney implementation, the clearcoat layer is simply added on top of the material without properly “mixing” it in, i.e. without also subtracting energy from the underlying shader.

“This layer, even though it has a large visual impact, represents a relatively small amount of energy so we don’t subtract any energy from the base layer.” (Physically-Based Shading at Disney 5.4 P16)

In their implementation, this is done for performance reasons. Is there a performance benefit to doing it this way in Cycles too, though? The problem is that the Principled BSDF allows pushing the Clearcoat value above 1.0, which makes it obvious that the clearcoat specular is added instead of mixed, and that breaks energy conservation.

extreme example

I know you shouldn’t do that anyway, but in this case the value should be either clamped at 1.0 or mixed instead of being added to make the result less incorrect and keep energy conservation.
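To make the difference between "added" and "mixed" concrete, here is a toy sketch using single scalar reflectances. It is not the Cycles or Disney code; the function names and the scalar model are assumptions for illustration only.

```python
def layer_added(base, coat, weight):
    """Coat reflectance is simply added on top of the base (no energy removed)."""
    return base + weight * coat


def layer_mixed(base, coat, weight):
    """Coat takes its share of the energy first; only the rest reaches the base.

    The weight is clamped to [0, 1] (an assumption of this sketch), so the
    result stays <= 1 as long as base and coat are both <= 1.
    """
    w = min(max(weight, 0.0), 1.0)
    k = w * coat                    # fraction of energy reflected by the coat
    return (1.0 - k) * base + k     # remaining energy goes to the base layer


# Bright base, strong coat, Clearcoat pushed to 2.0:
added = layer_added(0.9, 0.5, 2.0)  # exceeds 1.0, i.e. more light out than in
mixed = layer_mixed(0.9, 0.5, 2.0)  # stays at or below 1.0
```

With the weight clamped and the base attenuated, the combined reflectance can never exceed 1, which is the behaviour asked for above.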


Good question. Clearcoat should behave exactly like a clear coat, but instead its behaviour is odd: for it to have a real visual impact you have to go over 1, which makes it incorrect, and in the end it does not behave like a clear coat.

There may be a better solution to this.

Cheers!

Compatibility with other software is part of the reason, but we’ll likely give the user a choice between accuracy and compatibility at some point.


Sorry to intrude, but I’m afraid the problem is a bit more serious than that. The specular reflections from the basecoat are determined by the refractive indices of the basecoat and of the material the light comes from, so for a basecoat refractive index = 1.5 and an air interface index = 1 that works out at specular = ((1.5-1)/(1.5+1))^2/0.08 = 0.5 from the formula in the Disney paper. BUT the clearcoat has a refractive index close to that of the basecoat, and so the basecoat specular reflections more or less disappear: specular = ((1.5-1.5)/(1.5+1.5))^2/0.08 = 0. Think about wet concrete or varnish on wood: we deliberately put clearcoat on rough materials to get more saturated colours due to lower rough specular reflections. I think Disney confused the small remaining basecoat reflection with the large new clearcoat reflection and thought the clearcoat made only a small difference.
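The arithmetic above can be checked with a few lines of Python. This is only a sketch of the formula as quoted in this post; the function name `disney_specular` is made up for illustration.

```python
def disney_specular(ior_surface, ior_outside=1.0):
    """Map a pair of refractive indices to the Disney 'specular' value.

    Normal-incidence Fresnel reflectance F0 = ((n1 - n2) / (n1 + n2))^2,
    divided by 0.08 so that specular = 1.0 corresponds to F0 = 0.08.
    """
    f0 = ((ior_surface - ior_outside) / (ior_surface + ior_outside)) ** 2
    return f0 / 0.08


in_air = disney_specular(1.5, 1.0)      # approximately 0.5
under_coat = disney_specular(1.5, 1.5)  # 0.0, the interface vanishes
```

The second call shows the point being made: once the light arrives through a medium with nearly the same index, the basecoat's own specular term drops towards zero.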

If you take into account that in Blender specular 0.5 = clearcoat 4 (as per Disney) then it’s possible to build some nodes to set the correct values for each using the refractive index formulae. It all works and it’s possible, for example, to make a great wood texture and then automatically generate a great varnished wood texture “for free”. Or have a dog wee on the pavement. And not break any laws of physics into the bargain! However this should really be built into the shader so that when the user applies clearcoat the internally used basecoat specular automatically changes.
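A rough sketch of what such a node setup would compute, written as plain Python rather than nodes. It relies entirely on the equivalence stated above (specular 0.5 = clearcoat 4, i.e. a factor of 8), which is this post's assumption and may not match what a given Blender version actually renders; the function names and the example indices are hypothetical.

```python
def disney_specular(ior_surface, ior_outside=1.0):
    """Specular value from normal-incidence Fresnel reflectance, F0 / 0.08."""
    f0 = ((ior_surface - ior_outside) / (ior_surface + ior_outside)) ** 2
    return f0 / 0.08


# Assumed scale: specular 0.5 is taken to match clearcoat 4.
CLEARCOAT_PER_SPECULAR = 4.0 / 0.5


def coated_inputs(base_ior, coat_ior, air_ior=1.0):
    """Return (specular, clearcoat) inputs for a base under a clear layer.

    The base now meets the coat instead of air, so its specular uses the
    coat's index as the outside medium. The clearcoat value is derived from
    the air/coat interface and rescaled with the assumed factor above.
    """
    specular = disney_specular(base_ior, coat_ior)
    clearcoat = CLEARCOAT_PER_SPECULAR * disney_specular(coat_ior, air_ior)
    return specular, clearcoat


# Hypothetical values: wood at 1.5 under a varnish at 1.5
specular, clearcoat = coated_inputs(1.5, 1.5)
```

For matching indices the base specular goes to 0 and the clearcoat input comes out at roughly 4, which is the "varnished wood for free" case described above.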

Google seem to have picked up on this and it is well explained here.

Sorry for the rant, used to be a physicist once


Can you elaborate, but in a simpler way? I am a little confused. So what is the recommendation to fix the clearcoat? Which value should we multiply?

Just revisited this issue with Blender 3.0/Cycles X without GPU acceleration. Either I didn’t do the testing right 2 years ago or something has changed.

Clearcoat no longer renders in a similar way to specular, no matter what values I use. Clearcoat highlights seem to have about 10 times lower peak brightness than specular highlights, and the highlight is much softer/larger for a given roughness value.

You should decrease the specular input to the principled BSDF when you increase the clearcoat value, and vice versa, but I no longer know how to do this “scientifically”. Moreover after a couple of years messing with skin shaders I’ve realised that reality is not that simple; often you are simulating a mixture of materials at the surface at a microscopic scale so the physics of layering refractive indices does not really apply.

I’m officially baffled. I understand the priority now is to standardise the behaviour of PBR shaders, but it would be very useful to know how the Cycles X implementation works without having to understand the code. Is there a paper somewhere?
