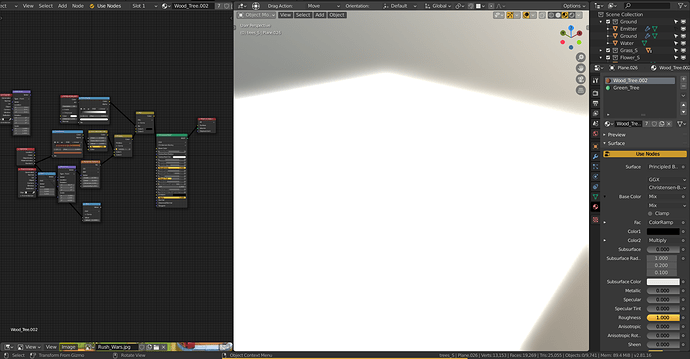

When EEVEE finishes compiling shaders, where does it save them? I noticed I can render in EEVEE, close and reopen the .blend without saving, and I don't have to recompile the shaders. I'm looking into building an EEVEE render farm, and I'm not sure what the most efficient way to render EEVEE from the CLI is at the moment.

It’s the graphics driver that has a shader cache, not Blender. The location of the shader cache depends on the platform and driver.
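As a rough illustration of "depends on the platform and driver", here is a minimal Python sketch that lists directories where a driver shader cache is often found. The paths and environment variables are commonly reported defaults for the NVIDIA and Mesa drivers, not something Blender controls, and they should be treated as assumptions to check against your driver's documentation. The function name `candidate_shader_cache_dirs` is hypothetical.

```python
import os
import sys
from pathlib import Path


def candidate_shader_cache_dirs(platform=sys.platform, env=None, home=None):
    """Return directories where a driver shader cache is commonly found.

    All paths are assumed defaults; the driver decides where (and whether)
    it caches, so an empty or missing directory proves nothing.
    """
    env = os.environ if env is None else env
    home = Path(home) if home is not None else Path.home()
    candidates = []

    if platform.startswith("linux"):
        # Explicit overrides come first when the user has set them.
        for var in ("__GL_SHADER_DISK_CACHE_PATH", "MESA_SHADER_CACHE_DIR"):
            if env.get(var):
                candidates.append(Path(env[var]))
        cache_root = Path(env.get("XDG_CACHE_HOME") or home / ".cache")
        candidates.append(cache_root / "nvidia" / "GLCache")   # NVIDIA, newer drivers (assumed)
        candidates.append(home / ".nv" / "GLCache")            # NVIDIA, older drivers (assumed)
        candidates.append(cache_root / "mesa_shader_cache")    # Mesa (assumed)
    elif platform == "win32":
        local = env.get("LOCALAPPDATA")
        if local:
            candidates.append(Path(local) / "NVIDIA" / "GLCache")  # NVIDIA (assumed)
    return candidates


if __name__ == "__main__":
    for path in candidate_shader_cache_dirs():
        print(path, "(exists)" if path.exists() else "(not found)")
```

The sketch only reports locations and never deletes anything. Other platforms and drivers return an empty list here simply because no default is assumed for them.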

Well, that's convenient. So if I render in EEVEE from the CLI, the second frame should be faster even if Blender restarts?

Possibly. The decision to cache rests with the GPU driver, so it may or may not cache. Any modern driver should support it, but there's no way to guarantee it.

At the moment the plan is to have 4 GPUs and 4 VMs per machine with PCI passthrough so we can run EEVEE jobs in parallel. Is it possible at all to tell EEVEE to use a different OpenGL device? That would simplify things quite a bit. It would be pretty amazing to have EEVEE render one frame per GPU in your machine.

LordOdin,

It is possible to run multiple instances of Blender, each with a different OpenGL device. In Windows 10, right-click on “blender.exe”; there should be a menu option “Render OpenGL on”, which shows a list of the available OpenGL devices. Just select a different graphics device for each instance of Blender. I have done Eevee animations with two RTX 2070s, one instance doing the odd frames and the other doing the even frames. It reduced the animation render times almost by half.
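The odd/even split can be sketched with Blender's command-line frame options (`-s` start, `-e` end, `-j` frame step, `-a` render animation). This is a minimal sketch: the helper name `split_render_commands` and the file name `scene.blend` are hypothetical, and the commands do not select a GPU by themselves; that still has to be done per instance as described above.

```python
import subprocess


def split_render_commands(blend_file, frame_start, frame_end, instances,
                          blender_exe="blender"):
    """Build one background-render command per instance.

    Instance i starts at frame_start + i and steps by the number of
    instances, so the instances interleave frames without overlapping.
    """
    if instances < 1:
        raise ValueError("instances must be at least 1")
    commands = []
    for i in range(instances):
        first = frame_start + i
        if first > frame_end:
            break  # more instances than frames; nothing left to assign
        commands.append([
            blender_exe, "-b", blend_file,
            "-s", str(first),      # first frame for this instance
            "-e", str(frame_end),  # shared last frame
            "-j", str(instances),  # frame step = number of instances
            "-a",                  # render the animation; must come last
        ])
    return commands


if __name__ == "__main__":
    # Launch all instances in parallel, then wait for them to finish.
    procs = [subprocess.Popen(cmd)
             for cmd in split_render_commands("scene.blend", 1, 250, 2)]
    for proc in procs:
        proc.wait()
```

With two instances and a start frame of 1, the first command renders the odd frames and the second the even frames. The frame options are placed before `-a` because Blender processes its arguments in order.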

Oh, that is good to know! I wonder if it's possible to launch Blender with a command that forces that setting.

It is possible to force a blender.exe onto a specific OpenGL device. Create multiple copies of “blender.exe” and rename them, e.g. “blender2.exe”…“blender4.exe”.

Open the NVIDIA Control Panel and go to “Manage 3D settings->Program settings”. Add each “blenderX.exe” program, then change its “OpenGL rendering GPU” setting and pick a different graphics device for each Blender executable.

Well, that seems like a mess, but it sounds like it would work. I was thinking of a more scalable approach, though one might not exist.

It’s going to be tricky. There is an OpenGL extension to select the card for OpenGL, but on NVIDIA it is only supported on Quadro cards. There is an alternative API that the NVIDIA Control Panel uses, but that SDK is currently not compatible with our license.

So it sounds like it would be even harder to do on AMD cards.

One possible problem with this is that RAM usage doubles with each instance.

Well, if you have multiple cards, chances are you have a pretty beefy machine. For instance, I have 32 threads, 64 GB of RAM and 3+ GPUs at any point in time.

Hello, devs! Could you please help: how can I force shaders to recompile? Sometimes materials completely fail to update. I have struggled with this a few times, and in those situations installing a new build of Blender helps. But maybe there is a more elegant way, such as a console command?

Hi @viadvena,

Blender does not cache any compiled shaders. It is the OpenGL driver that facilitates this feature if supported.

Jeroen