Hey everyone! We have been using Cycles X on a project, and I just noticed that some of the denoising passes have disappeared from the render layers, namely the Denoising Depth pass. I am wondering if it was removed along with NLM. While we use OIDN for denoising, the Denoising Depth pass was very useful for our atmosphere/mist/fog compositing setups, because it was anti-aliased and worked great with transparent/glass/refractive materials in combination with the final denoised image.

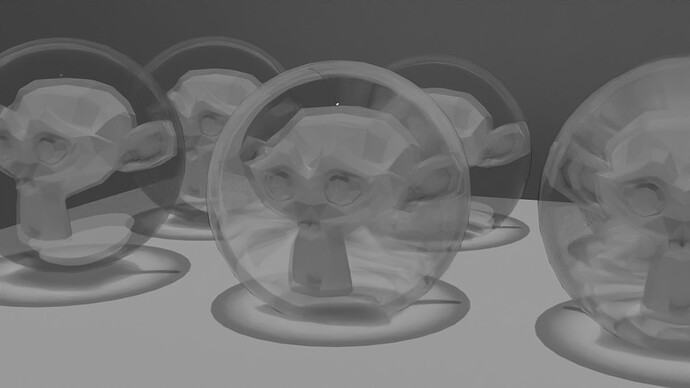

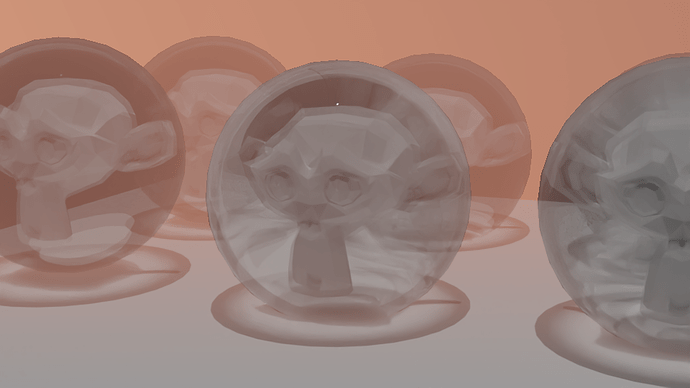

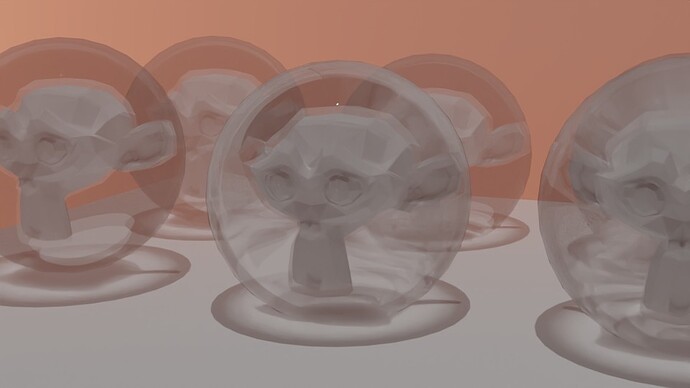

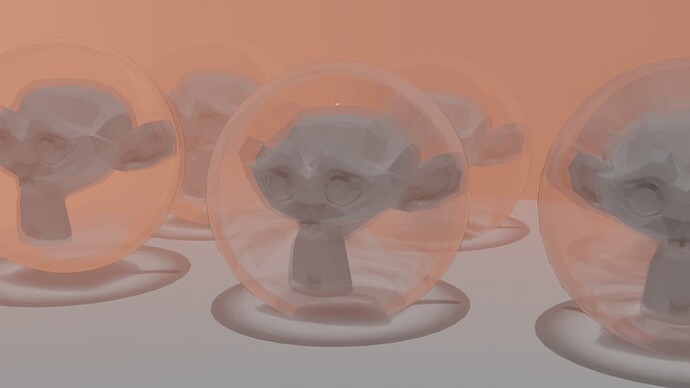

Here is an example with a scene of Suzannes with Glass Spheres:

The final render:

The glass spheres’ material is a simple glass shader mixed with a transparency node.

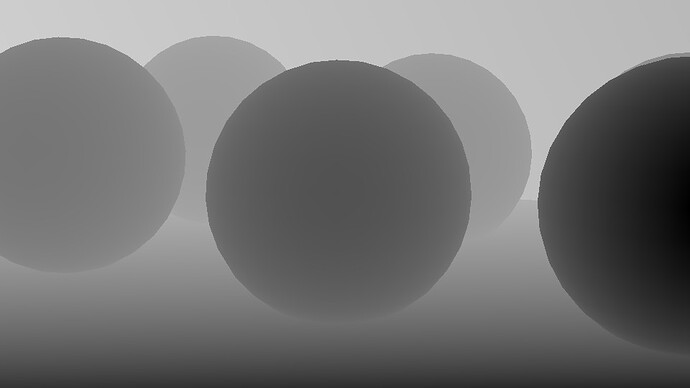

Here is what the normalized depth pass looks like:

As expected, it doesn’t take into account transparency or refraction.

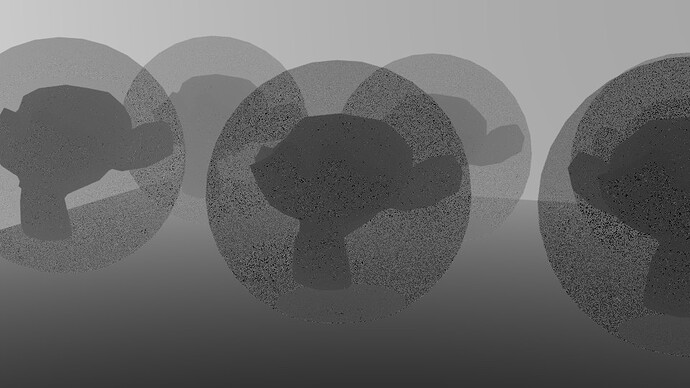

Here is what the mist pass looks like:

It takes into account transparency, but not refraction, which can cause problems when compositing the mist effect on the final image. It is also noisy, but can be denoised:

The denoised version looks better and takes transparency into account, but it still ignores refraction.

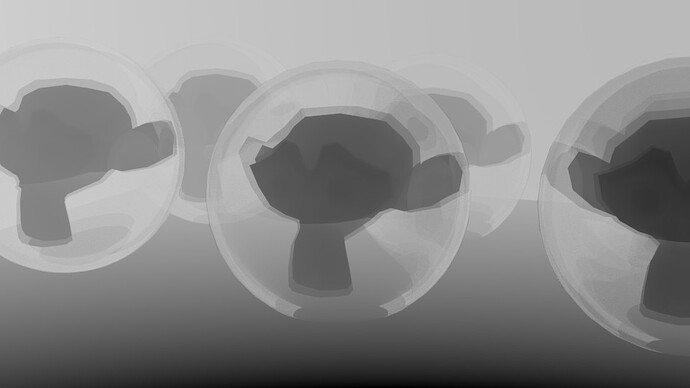

Here is the fabled denoising depth pass (normalized):

It is by far the cleanest one of the bunch, is anti-aliased, and most importantly, not only does it take into account transparency, but refraction as well. This is incredibly important for compositing, as the mist/fog effect applied in the background is refracted accurately on the glass object, and is composited in. Here is what I mean.

Here is a fog effect applied using the normalized depth pass:

The front spheres have no transparency information, and as such do not handle the colored fog accurately.
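For anyone who wants to reproduce the comparison outside the compositor, here is a minimal sketch of what a "normalize the depth, then mix in a fog color" setup computes per pixel. It is plain Python on flat lists, not Blender's actual node code. The function names and the `background` cutoff of `1e10` for pixels that hit nothing are assumptions made for illustration.

```python
def normalize_depth(depth, background=1e10):
    """Rescale depth values to [0, 1] using the nearest and farthest hits.

    Pixels at or beyond `background` (an assumed "no hit" cutoff) map to 1.0.
    """
    hits = [d for d in depth if d < background]
    if not hits:
        return [1.0 for _ in depth]
    near, far = min(hits), max(hits)
    span = far - near
    if span == 0:
        # Every hit is at the same distance: treat hits as "no fog".
        return [0.0 if d < background else 1.0 for d in depth]
    return [(d - near) / span if d < background else 1.0 for d in depth]


def apply_fog(image, fog_factor, fog_color):
    """Linear mix per pixel: pixel * (1 - f) + fog_color * f."""
    return [
        tuple(c * (1.0 - f) + fc * f for c, fc in zip(pixel, fog_color))
        for pixel, f in zip(image, fog_factor)
    ]


# Three surface hits plus one background pixel.
factors = normalize_depth([2.0, 4.0, 6.0, 1e10])
fogged = apply_fog([(1.0, 0.0, 0.0)], [0.5], (0.0, 0.0, 1.0))
```

Because the fog factor comes from a single depth value per pixel, a glass surface gets the fog amount of the glass itself rather than of whatever is seen through it, which is the limitation described above.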

Here is a fog effect applied using the denoised mist pass:

While this one handles transparency better, the refraction inside the ball is ignored, which causes colored halos, and the refracted mist does not have its accurate color.

And finally, here is a fog effect applied using the denoising depth pass:

While at first glance the effect seems strong, it is actually the most “realistic” or desired of the bunch: transparency is taken into account, and the mist in the background is accurately refracted in the glass. All our fog compositing relied on the denoising depth pass, and now that it’s gone it is proving incredibly hard to composite fog accurately and easily on scenes that have a lot of glass and refractive/transparent materials.

TL;DR:

The denoising depth pass was incredibly useful. Can we have it back? And if not, is there a viable alternative to it right now, or a node setup that simulates it?

Thank you for all your incredible work on Cycles!