There’s no need for a custom build for Optix on GTX cards anymore.

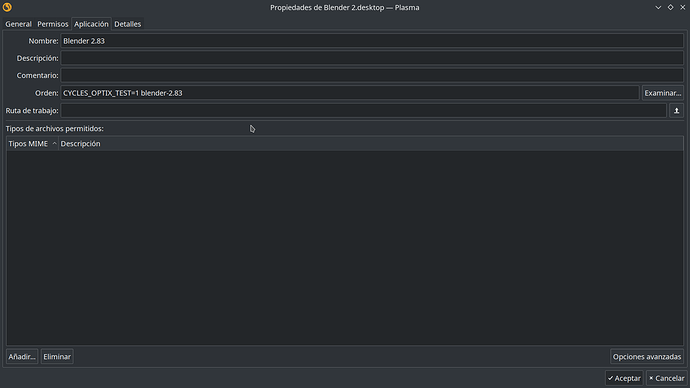

Cycles/Optix: Add CYCLES_OPTIX_TEST override

https://developer.blender.org/rB58ea0d93f193adf84162d736c3c69500584e1aef

Tested with an Nvidia 750 Ti: the viewport denoiser and rendering with Optix both work, and renders finish between 10 and 20 seconds faster depending on the scene I tried.
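
In case it helps anyone, here is a minimal sketch of starting Blender with the `CYCLES_OPTIX_TEST` override from the commit above set in its environment. The helper names and the default `blender` executable path are hypothetical, and I'm assuming the variable only needs to be defined (the value `1` is arbitrary), so check the commit if it doesn't work for you.

```python
import os
import subprocess


def optix_test_env(base_env=None):
    """Return a copy of the environment with CYCLES_OPTIX_TEST defined."""
    # Copy so the caller's environment (or os.environ) is not modified.
    env = dict(os.environ if base_env is None else base_env)
    # Assumption: Cycles only checks that the variable exists; "1" is arbitrary.
    env["CYCLES_OPTIX_TEST"] = "1"
    return env


def launch_blender(blender_path="blender"):
    """Start Blender with the override set (blender_path is a placeholder)."""
    return subprocess.Popen([blender_path], env=optix_test_env())


if __name__ == "__main__":
    launch_blender()
```

Setting the variable in your shell or in a launcher script before starting Blender should have the same effect; the Python wrapper is just one way to do it without changing your system settings.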