I’ll share my personal view and understanding of this topic here. In summary, I do not see any good reason to forbid dynamic_cast in most of the code. It’s a cheap, easy, safe way to add some type-safety checks. The only case where it can become a problem is when millions of casts need to be performed per second.

To me, the terrible performance of static_cast in debug builds (when the Undefined Behavior Sanitizer is enabled) would also be a reason to prefer dynamic_cast in most cases. Admittedly, the same reasoning applies here as to the use of dynamic_cast in release builds: the overhead should not matter anyway in ‘common’ cases.

Bad Design

I find this point fairly moot and irrelevant. This usually applies to designs where an abstract base type is implemented by one or more sub-types, in some form of ‘interface’ paradigm. I think that the ‘bad design’ here is not the need to use dynamic_cast, but the need to down-cast in general.

RTTI

Code using dynamic_cast also typically has its own handling of type info to decide to which sub-type to cast a base pointer. As such, I see dynamic_cast as a simple, fairly efficient and safe way to double-check and validate the correctness of the ‘type management’ performed by the code.
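As a minimal sketch of that idea, assuming a hypothetical hierarchy (the `Item`, `Button`, `Label` and `ItemType` names are invented for illustration and are not taken from any real code base), the code's own type tag decides which sub-type to cast to, and dynamic_cast double-checks that decision:

```cpp
#include <cassert>

// Hypothetical type tag maintained by the code itself.
enum class ItemType { Button, Label };

struct Item {
  explicit Item(ItemType item_type) : type(item_type) {}
  virtual ~Item() = default;  // A polymorphic base is required for dynamic_cast.
  const ItemType type;
};

struct Button : Item {
  Button() : Item(ItemType::Button) {}
  int clicks = 0;
};

struct Label : Item {
  Label() : Item(ItemType::Label) {}
};

// The tag drives the decision, dynamic_cast validates it.
int click_count(Item &item)
{
  if (item.type != ItemType::Button) {
    return 0;
  }
  Button *button = dynamic_cast<Button *>(&item);
  // Fails only if the tag and the real dynamic type disagree,
  // i.e. if the code's own 'type management' has a bug.
  assert(button != nullptr);
  return button->clicks;
}
```

If a sub-type were ever constructed with the wrong tag, the assert would catch it at the cast site instead of letting the mismatch propagate.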

Even if the code does not validate the cast pointer, a failed dynamic_cast yields a null pointer, so the first use of the result typically crashes immediately, in a way that is fairly clear and easy to understand. A mismatched static_cast, on the other hand, gives ‘something’ that may or may not crash, immediately or ten function calls later.
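To make the failure modes concrete, here is a small sketch using hypothetical types (`Item`, `Button`, `Label` and both helper functions are made up for this example). It relies only on standard C++ behavior: the pointer form of dynamic_cast returns nullptr on a mismatch, and the reference form throws std::bad_cast.

```cpp
#include <typeinfo>  // std::bad_cast

// Hypothetical hierarchy, for illustration only.
struct Item {
  virtual ~Item() = default;
};
struct Button : Item {
  int clicks = 0;
};
struct Label : Item {
};

// Pointer form: a mismatch yields nullptr, so an unvalidated use
// fails at the very first dereference.
Button *try_button(Item *item)
{
  return dynamic_cast<Button *>(item);
}

// Reference form: a mismatch throws std::bad_cast right at the cast.
bool is_button(Item &item)
{
  try {
    Button &button = dynamic_cast<Button &>(item);
    (void)button;  // Only the success of the cast matters here.
    return true;
  }
  catch (const std::bad_cast &) {
    return false;
  }
}
```

A `static_cast<Button *>` applied to a `Label` would compile just as well, but it is undefined behavior, with no guaranteed symptom at the cast site.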

Performance

Dynamic (down) casting does require some additional operations on the CPU. This cost depends on the complexity and depth of the type hierarchy. On modern hardware and compilers, a dynamic_cast typically takes a few nanoseconds to a few tens of nanoseconds.

While most benchmarks available online are fairly old (10 years or more), this one is more recent (alas, it does not seem to specify the compiler used).

I also ran a small experiment with the (relatively simple) new uiItem type hierarchy from PR 124405 on my machine (Ryzen 9 5950X, Debian testing, clang 16).

Results with a release build are in the 5 nanoseconds area per dynamic_cast on average (and virtually 0 nanoseconds for static_cast as expected).

A debug build (with the Undefined Behavior Sanitizer enabled) gives… interesting results: dynamic_cast takes about 20 nanoseconds on average, and static_cast 3600 nanoseconds! The run also produced a few ‘runtime error:’ reports about invalid down-casting.

As a conclusion regarding performance: while it should be avoided in critical areas of the code (where there would be millions of casts per second), dynamic_cast typically does not have a significant impact. In a debugging context, when using an undefined behavior sanitizer, it can actually be several orders of magnitude more efficient than a static_cast.
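For anyone wanting to try a similar measurement, here is a rough sketch of how it could be set up. This is not the code behind the numbers above: the hierarchy, the `CastTiming` struct and the `time_dynamic_casts` function are all hypothetical, and a serious benchmark would need repeated runs and more care about what the optimizer is allowed to remove.

```cpp
#include <chrono>
#include <cstddef>
#include <memory>
#include <vector>

// Hypothetical two-type hierarchy; real hierarchies are usually deeper.
struct Item {
  virtual ~Item() = default;
};
struct Button : Item {
};
struct Label : Item {
};

struct CastTiming {
  std::size_t buttons_found;
  double nanoseconds_per_cast;
};

// Times one dynamic_cast per item over a mixed list of Buttons and Labels.
CastTiming time_dynamic_casts(std::size_t item_count)
{
  std::vector<std::unique_ptr<Item>> items;
  items.reserve(item_count);
  for (std::size_t i = 0; i < item_count; i++) {
    if (i % 2 == 0) {
      items.push_back(std::make_unique<Button>());
    }
    else {
      items.push_back(std::make_unique<Label>());
    }
  }

  // Counting the successful casts keeps the loop from being optimized away.
  std::size_t found = 0;
  const auto start = std::chrono::steady_clock::now();
  for (const std::unique_ptr<Item> &item : items) {
    if (dynamic_cast<Button *>(item.get()) != nullptr) {
      found++;
    }
  }
  const auto end = std::chrono::steady_clock::now();

  const double total_ns = std::chrono::duration<double, std::nano>(end - start).count();
  const double per_cast = item_count > 0 ? total_ns / static_cast<double>(item_count) : 0.0;
  return {found, per_cast};
}
```

Calling `time_dynamic_casts(10'000'000)` in a release build and again in a sanitizer build gives a first idea of the per-cast cost on a given machine. The absolute values will vary with compiler, flags and hierarchy.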

Alternative Approaches

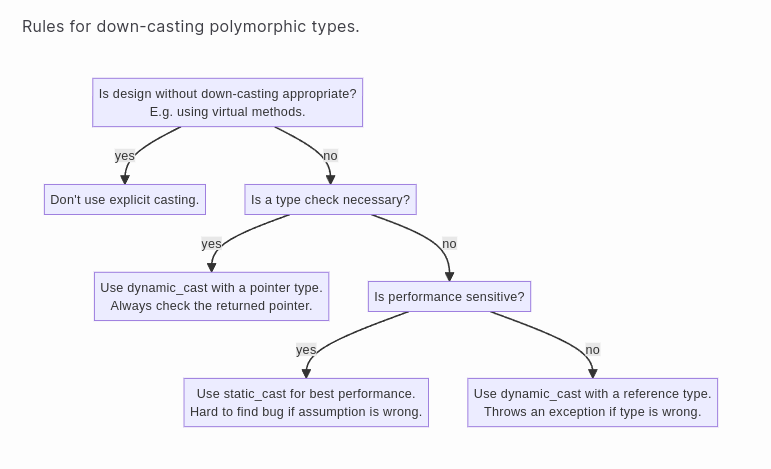

While using static_cast and asserting that its result matches dynamic_cast is likely a good thing to do in performance-critical areas, I am not convinced the added verbosity (and lesser bug-discoverability) is worth it. Not to mention the consequences of using a UBSan build on static_cast.
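One possible shape for the ‘static_cast plus assert’ approach is sketched below. The `checked_static_cast` helper and the `Item`/`Button` types are hypothetical names, not an existing API. The check runs through dynamic_cast only, so the assert itself never evaluates an invalid static_cast.

```cpp
#include <cassert>

// Hypothetical hierarchy, for illustration only.
struct Item {
  virtual ~Item() = default;
};
struct Button : Item {
  int clicks = 0;
};

// Hypothetical helper: validated by dynamic_cast while asserts are enabled,
// a plain static_cast once NDEBUG is defined.
template<typename Derived, typename Base>
Derived *checked_static_cast(Base *base)
{
  assert(base == nullptr || dynamic_cast<Derived *>(base) != nullptr);
  return static_cast<Derived *>(base);
}
```

A call site then reads `Button *button = checked_static_cast<Button>(item);`. The trade-off is the one described above: a type mismatch is only discovered in builds where asserts are active.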

The virtual methods approach offers the same level of type-safety as dynamic_cast, but with better performance (about twice as fast in simple cases, and remaining relatively constant as the type hierarchy grows more complex). However, it adds a lot of clutter to the code, and requires far more maintenance from the dev team, especially as the type hierarchy gets more complex.

I am even tempted to consider that, if using dynamic_cast becomes a problem and implementing virtual casting methods becomes a solution, then it is a good sign that the current polymorphism-based design is the wrong choice.
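For reference, a bare-bones version of the virtual methods approach could look like the sketch below. The type and method names are hypothetical. It also illustrates the maintenance cost: every new sub-type needs a new virtual method in the base class plus an override.

```cpp
// Forward declarations so the base class can name its sub-types.
struct Button;
struct Label;

struct Item {
  virtual ~Item() = default;
  // One virtual 'cast' method per sub-type, defaulting to "not that type".
  virtual Button *as_button() { return nullptr; }
  virtual Label *as_label() { return nullptr; }
};

struct Button : Item {
  Button *as_button() override { return this; }
};

struct Label : Item {
  Label *as_label() override { return this; }
};
```

Each ‘cast’ is then a single virtual call, such as `item->as_button()`, whose cost does not depend on the depth of the hierarchy.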