Cycles will support running on this GPU; we just need to find a bit of time for it. For RTX hardware raytracing support I can’t promise any specific plans, but we would like to have it.

The OptiX denoiser running in real time in RenderMan is really something amazing…

No more noise even when interacting with the viewport (eventually).

Hopefully this will come to Cycles someday.

Nvidia added the OptiX shared libs to the driver. Sadly they did not include the denoiser, so we can’t really use it in Blender right now, due to the required closed-source binary redistributable.

Well… we can use it in the form of an add-on AFAIK; Remington Graphics is doing it already.

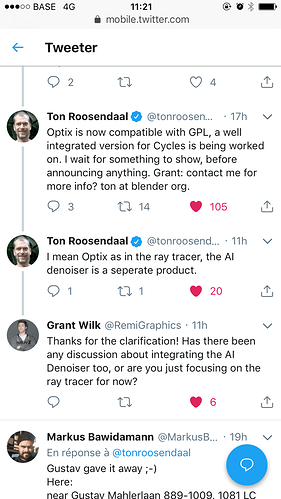

Guys, does a "well integrated version of optix for cycles" mean deep support for RTX cards and their RT cores? Or are these two separate things?

It seems so…

From Nvidia’s website:

Developers can access NVIDIA RTX ray tracing through the NVIDIA OptiX application programming interface, through Microsoft’s DirectX Raytracing API (DXR) and Vulkan, the new generation, cross-platform graphics standard from Khronos Group.

And another quote from their site:

The OptiX API is an application framework that leverages RTX Technology to achieve optimal ray tracing performance on the GPU

If Cycles gets OptiX ray tracing in the official branch of Blender, I think the AI OptiX denoiser from Grant will be part of the main branch too.

We can reasonably use the OptiX raytracing API because it’s part of the graphics driver, similar to OpenGL, for which the GPL has an exception. The OptiX denoiser, however, is unlikely to ever be bundled with Blender, due to GPL incompatibility.

Nope, they are working on the raytracing acceleration, not the AI denoiser, and as LazyDodo said, if it’s not GPL compatible it would be available as an add-on, but that’s it.

Cheers.

Same for potential real-time ray tracing for EEVEE?

Yes, it’s the same licensing situation for EEVEE.

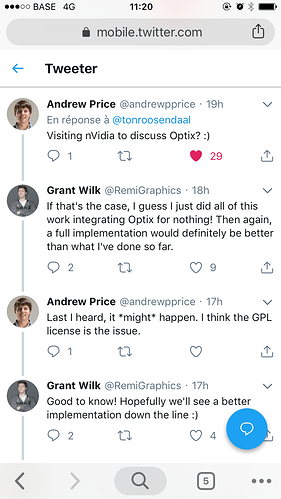

Ton just tweeted otherwise.

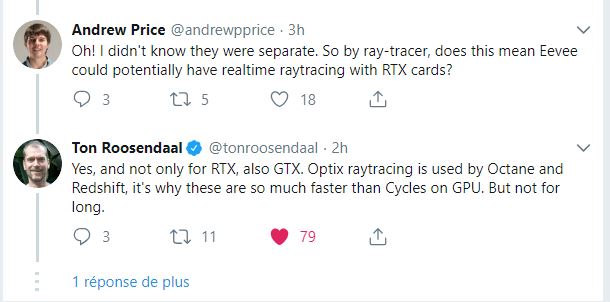

Redshift is faster because it’s a biased renderer and has its unified sampling method, etc.

When Ton says "an integrated version for cycles is being worked on", who is it being worked on by?

That is one of the reasons, and another is that it uses OptiX acceleration. Octane is faster even though it’s similar to Cycles, and that seems to be one of the reasons, though not the only one.

Regarding bias, you can introduce plenty of bias in Cycles to accelerate your renders, with Simplify for example. It’s not the same as Redshift, but if you use it wisely it can help you accelerate renders A LOT.

Cheers!

I am sorry, but it seems Ton has no idea what he is talking about. Redshift doesn’t run on OptiX; it runs on CUDA. And the Octane denoiser is also not OptiX based, but their own implementation.

You may be right, but just to be sure I’m asking the Redshift crew about this.

And the reason why Cycles is slow compared to Octane or Redshift is that Ton (I might be wrong) killed all the useful accelerating features like adaptive sampling, GI cache, and similar techniques. Brute force or photon mapping. There was a patch for it, it was working and the speedup was huge, and that was a long time ago. But Ton said, if I remember, that he doesn’t want to have any computations apart from the BVH before rendering, so it went to the trash.

What? Nobody “killed off” any features, especially not Ton.

The adaptive sampling patch was abandoned because it doesn’t work well and adaptive sampling is extremely tricky to get right, but it remains on the roadmap.

As for caching methods, renderers in general are moving away from that because nowadays regular PT simply is better - even the guys from Corona, which is known for having remarkably good caching, said the same at Siggraph. There is some promise in algorithms that are only used to guide sampling distributions and are trained during rendering instead of in a prepass, but I can tell you from experience that implementing something like that is extremely tricky as well.

Photon mapping has a huge range of problems, nobody uses it anymore for good reasons.

I am sorry if I don’t remember stuff right, but in my head the trigger was pulled by Ton. That was some time ago, so I might be wrong. Adaptive sampling, yes, it’s tricky… but instead of working on it and getting it right, we dropped it. And it was in really nice condition at the time; I used it on many commercial jobs.

About caching: everyone says they are moving away, but everyone is using it… PM, yes, it is old, etc.; it was just an example.

But my problem is… if we kept Cycles so simple… why don’t we support RTX? We technically should be first, right? I know that there is a focus on getting 2.8 done, but… for me the logic behind some decisions is just really weird.