Here’s what I think I know so far. Please correct me where I’m wrong.

In Blender 4.0, curves are uploaded to the render device as points and the geometry is generated there. Normals for curves are calculated at ray intersections in curve_intersect.h:

    if (!(sd->type & PRIMITIVE_MOTION)) {
      P_curve[0] = kernel_data_fetch(curve_keys, ka);
      P_curve[1] = kernel_data_fetch(curve_keys, k0);
      P_curve[2] = kernel_data_fetch(curve_keys, k1);
      P_curve[3] = kernel_data_fetch(curve_keys, kb);
    }
    else {
      motion_curve_keys(kg, sd->object, sd->prim, sd->time, ka, k0, k1, kb, P_curve);
    }

    P = P + D * t;

    const float4 dPdu4 = catmull_rom_basis_derivative(P_curve, sd->u);
    const float3 dPdu = float4_to_float3(dPdu4);

    if (sd->type & PRIMITIVE_CURVE_RIBBON) {
      /* Rounded smooth normals for ribbons, to approximate thick curve shape. */
      const float3 tangent = normalize(dPdu);
      const float3 bitangent = normalize(cross(tangent, -D));
      const float sine = sd->v;
      const float cosine = cos_from_sin(sine);

      // This is the important line!
      sd->N = normalize(sine * bitangent - cosine * normalize(cross(tangent, bitangent)));

The sine * bitangent - cosine component is what generates the actual rounded normals, I think.
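To check my reading of that line, here is a standalone sketch of the same expression outside of Cycles. `Vec3` and its helpers are my own stand-ins for `float3`, and `cos_from_sin` is assumed to be `sqrt(1 - s*s)` clamped at zero; neither is copied from the kernel. If the sketch is faithful, the normal points straight back along the ray at `v = 0`, tilts toward the bitangent as `v` approaches ±1, and never faces away from the ray origin.

```cpp
#include <cmath>

// Minimal stand-in for Cycles' float3 (hypothetical, not the kernel type).
struct Vec3 { float x, y, z; };

Vec3 operator-(Vec3 a) { return {-a.x, -a.y, -a.z}; }
Vec3 operator-(Vec3 a, Vec3 b) { return {a.x - b.x, a.y - b.y, a.z - b.z}; }
Vec3 operator*(float s, Vec3 a) { return {s * a.x, s * a.y, s * a.z}; }
float dot(Vec3 a, Vec3 b) { return a.x * b.x + a.y * b.y + a.z * b.z; }
Vec3 cross(Vec3 a, Vec3 b)
{
  return {a.y * b.z - a.z * b.y, a.z * b.x - a.x * b.z, a.x * b.y - a.y * b.x};
}
Vec3 normalize(Vec3 a) { return (1.0f / std::sqrt(dot(a, a))) * a; }

// Assumption: cos_from_sin(s) is sqrt(1 - s^2), clamped so it never goes NaN.
float cos_from_sin(float s) { return std::sqrt(std::fmax(0.0f, 1.0f - s * s)); }

// Same expression as the ribbon branch above; v plays the role of sd->v in [-1, 1].
Vec3 ribbon_normal(Vec3 dPdu, Vec3 D, float v)
{
  const Vec3 tangent = normalize(dPdu);
  const Vec3 bitangent = normalize(cross(tangent, -D));
  const float sine = v;
  const float cosine = cos_from_sin(sine);
  return normalize(sine * bitangent - cosine * normalize(cross(tangent, bitangent)));
}
```

With a tangent along +X and a ray travelling along -Z, `ribbon_normal` gives +Z at `v = 0` and ±Y at the two edges, which is the half-cylinder sweep I'd expect from a "rounded" ribbon.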

Further down in the same file:

    sd->Ng = (sd->type & PRIMITIVE_CURVE_RIBBON) ? sd->wi : sd->N;

I’ve tried various combinations of commenting out the sd->N calculation, forcing sd->Ng = sd->N, and sd->N = normalize ( … ) and none of them look quite right.

Then I fetched the old Blender 2.83 source code and if you squint at geom_curve_intersect.h you can find an early version of what is now a much more compact curve_intersect.h, as well as its code to calculate the normal:

    if (sd->type & PRIMITIVE_CURVE) {
      P_curve[0] = kernel_tex_fetch(__curve_keys, ka);
      P_curve[1] = kernel_tex_fetch(__curve_keys, k0);
      P_curve[2] = kernel_tex_fetch(__curve_keys, k1);
      P_curve[3] = kernel_tex_fetch(__curve_keys, kb);
    }
    else {
      motion_cardinal_curve_keys(kg, sd->object, sd->prim, sd->time, ka, k0, k1, kb, P_curve);
    }

    float3 p[4];
    p[0] = float4_to_float3(P_curve[0]);
    p[1] = float4_to_float3(P_curve[1]);
    p[2] = float4_to_float3(P_curve[2]);
    p[3] = float4_to_float3(P_curve[3]);

    P = P + D * t;

    tg = normalize(curvetangent(isect->u, p[0], p[1], p[2], p[3]));

    if (kernel_data.curve.curveflags & CURVE_KN_RIBBONS) {
      sd->Ng = normalize(-(D - tg * (dot(tg, D))));
    }

    sd->N = sd->Ng;

… so I tried implementing that myself:

    if (sd->type & PRIMITIVE_CURVE_RIBBON) {
      /* Rounded smooth normals for ribbons, to approximate thick curve shape. */
      const float3 tangent = normalize(dPdu);
      const float3 bitangent = normalize(cross(tangent, -D));
      const float sine = sd->v;
      const float cosine = cos_from_sin(sine);

      // new code
      sd->N = normalize(-(D - tangent * (dot(tangent, D))));

… and even swapped the assignment of N and Ng, like in the 2.83 source code.

Spoiler alert, it didn’t work.
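One thing I worked out on paper afterwards: expanding the triple product gives `cross(tangent, cross(tangent, -D)) = D - tangent * dot(tangent, D)`, so the 2.83 expression looks like the 4.0 expression with `sine = 0` and `cosine = 1`, i.e. the normal at the centre of the ribbon, used across its whole width. If that holds, swapping it in would only remove the rounding rather than change where the normal points. The sketch below checks the equality numerically; `Vec3`, `normal_2_83` and `normal_4_0_centre` are hypothetical names of mine, not Cycles functions, and the tangent is assumed to be unit length.

```cpp
#include <cmath>

// Minimal stand-in for Cycles' float3 (hypothetical, not the kernel type).
struct Vec3 { float x, y, z; };

Vec3 operator-(Vec3 a) { return {-a.x, -a.y, -a.z}; }
Vec3 operator-(Vec3 a, Vec3 b) { return {a.x - b.x, a.y - b.y, a.z - b.z}; }
Vec3 operator*(Vec3 a, float s) { return {a.x * s, a.y * s, a.z * s}; }
float dot(Vec3 a, Vec3 b) { return a.x * b.x + a.y * b.y + a.z * b.z; }
Vec3 cross(Vec3 a, Vec3 b)
{
  return {a.y * b.z - a.z * b.y, a.z * b.x - a.x * b.z, a.x * b.y - a.y * b.x};
}
Vec3 normalize(Vec3 a) { return a * (1.0f / std::sqrt(dot(a, a))); }

// 2.83-style ribbon normal: -D with its component along the tangent removed.
Vec3 normal_2_83(Vec3 tg, Vec3 D)
{
  return normalize(-(D - tg * dot(tg, D)));
}

// 4.0 ribbon expression evaluated at the ribbon centre (sine = 0, cosine = 1).
Vec3 normal_4_0_centre(Vec3 tangent, Vec3 D)
{
  const Vec3 bitangent = normalize(cross(tangent, -D));
  return -normalize(cross(tangent, bitangent));
}
```

Both functions break down when the ray is parallel to the tangent, since the projected vector has zero length there; I have not looked at how Cycles guards against that case.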

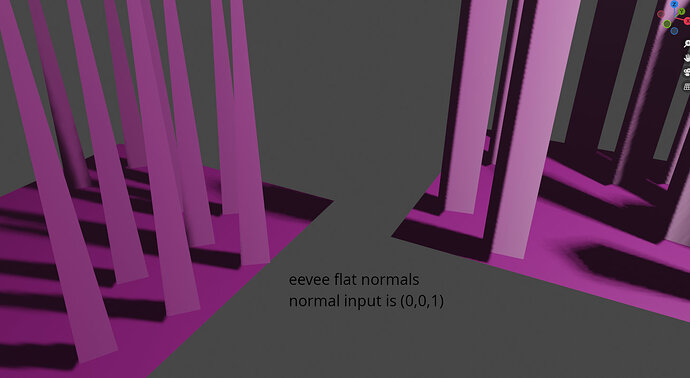

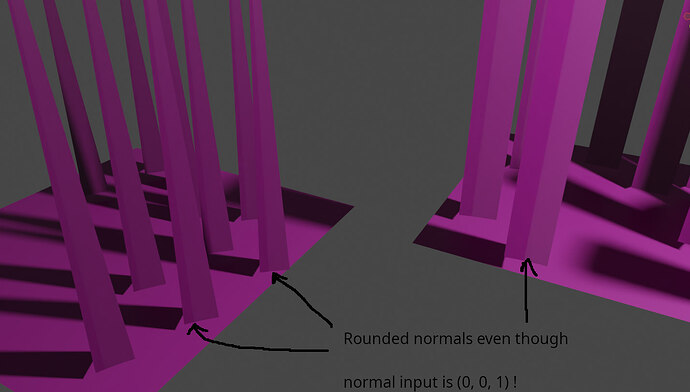

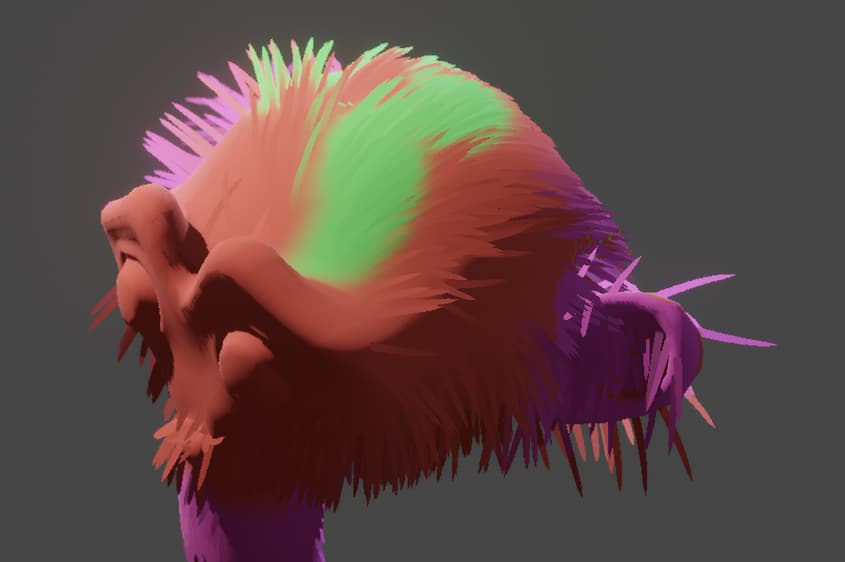

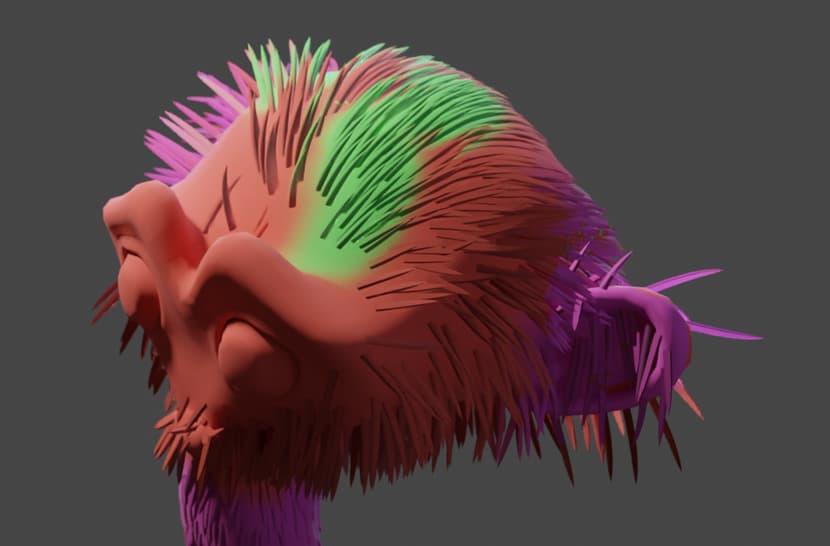

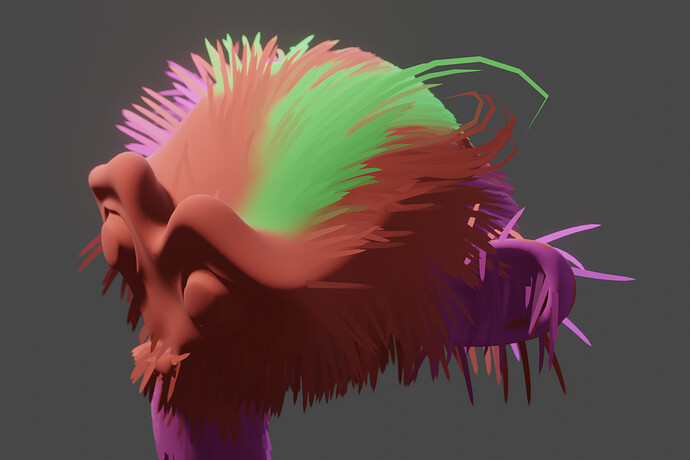

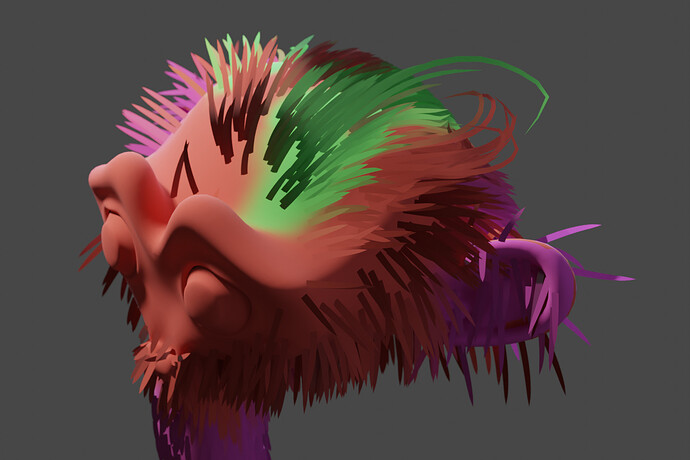

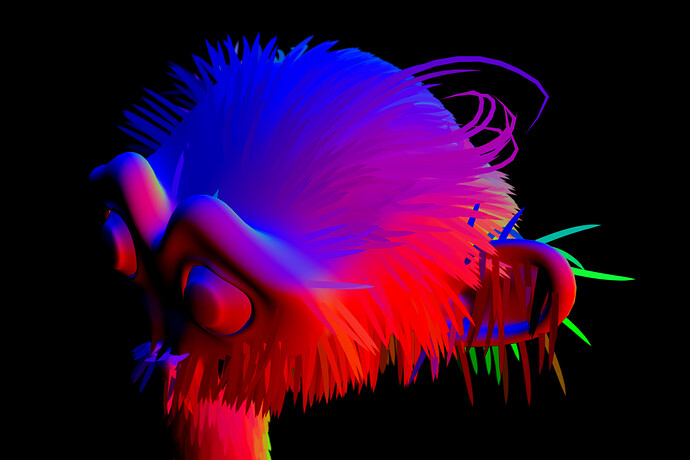

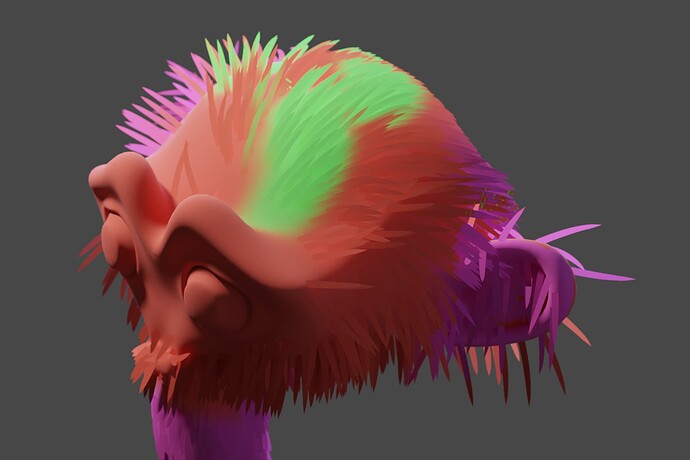

[ Screenshots here in a bit, my build is still compiling ]

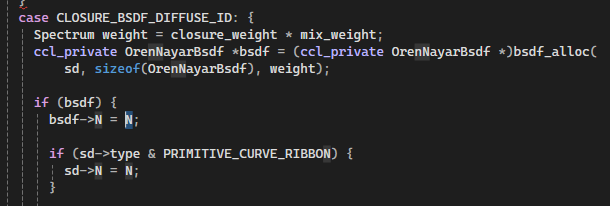

Then I noticed that in closure/bsdf.h, the normal is also being overridden:

    /* For curves use the smooth normal, particularly for ribbons the geometric
     * normal gives too much darkening otherwise. */
    *eval = zero_spectrum();
    *pdf = 0.f;
    int label = LABEL_NONE;
    const float3 Ng = (sd->type & PRIMITIVE_CURVE) ? sc->N : sd->Ng;

I’ve gotten some interesting results by setting Ng = sd->Ng, but I still get some hair strands that are randomly dark. Now where I’m stumped (I think) is on backface handling. Blender 2.83’s source code has some explicit references to backfacing curve primitives, and does Scary Math Stuff in response, again in geom_curve_intersect.h. And I think what I’m seeing is the “backside” (relative to the light source) of the hair strand at certain angles simply not receiving any light, as opposed to in Eevee where the face is lit the same on both sides.
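To pin down what I mean by "lit the same on both sides", here is a toy diffuse term, not Cycles or Eevee code. `lambert_one_sided` clamps the cosine at zero, so a normal pointing away from the light receives nothing; `lambert_two_sided` ignores the sign of the normal. That Eevee behaves like the second function on hair is my assumption from how the renders look, not something I have confirmed in its source.

```cpp
#include <algorithm>
#include <cmath>

// Minimal stand-in for a 3D vector (hypothetical, not a Cycles type).
struct Vec3 { float x, y, z; };

float dot(Vec3 a, Vec3 b) { return a.x * b.x + a.y * b.y + a.z * b.z; }

// One-sided diffuse term: normals facing away from the light get zero.
// N and L are assumed to be unit length, with L pointing toward the light.
float lambert_one_sided(Vec3 N, Vec3 L)
{
  return std::max(dot(N, L), 0.0f);
}

// Two-sided diffuse term: the sign of the normal is ignored.
float lambert_two_sided(Vec3 N, Vec3 L)
{
  return std::fabs(dot(N, L));
}
```

For a normal of +Z lit from -Z, the first returns 0 and the second returns 1, which matches the randomly dark strands versus the evenly lit ones.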

So to sum up, I’ve got a lot of questions:

- The normal-related code I’ve found is in two spots: ray intersection and shader closure sampling. Is there anywhere else I should be looking?

- sd->Ng and sd->N are true normal (geometry) and normal, as far as I can tell. We also have sc->N in bsdf.h which I’m not sure if it’s a typo or not. What is the source of all of these? Which one comes from the shader? The reason I’m not sure if it’s a typo is because I was assuming that the normal set in the shader (closure) is the custom vector I’m trying to set, but they all seem to interact in weird ways. Setting a custom normal in the shader in stock Cycles does produce a change! … but it seems to get “overlaid” with the default rounded normal. It’s very weird and I don’t think I’m explaining it right.

- Is there something about raytracing that prevents the “same on both sides” effect I’m trying to get? I’ve been able to make an awful shader node that dynamically flips the normal on the “far side from camera” of a face, assuming it’s backfacing. Which in theory should help, and works as expectedish on a single plane, but I don’t think curves have any such notion of a rear-facing “far side” surface.

Thanks for reading my word salad. I know just enough about rasterizers, raytracers, and blender code structure to be dangerous.