How does UV unwrapping work, and how can I implement it in a generic OpenGL application? Please help me.

There is some information on the system here, with most code now in source/blender/editors/uvedit/:

https://archive.blender.org/wiki/index.php/Dev:Source/Textures/UV/Unwrapping/

Is there some way to implement the UV unwrap algorithm in a C++ application? Or could you at least explain a bit about what operations it performs on meshes, and whether it processes vertices and faces to calculate texture coordinates?

You’ll have to look into it a bit more yourself and ask more specific questions, we can’t provide a step by step guide. This code is part of Blender and can’t just be copy pasted into another application, work will be needed to reuse it. And note that the code is GPL licensed, which may or may not be compatible with your application.

UV unwrapping computes UV texture coordinates based on vertices and faces.

Thank you for your help. I have already found the algorithm for generating UVs: it's Least Squares Conformal Mapping. I'll try to port the algorithm from C++ to Java.

Hi, the original LSCM algorithm only works for triangulation (if I understand it correctly), how does Blender make it work for quadrangulation? I looked at uvedit_parametrizer.c but didn't find any related code. Or does the LSCM in Blender also only work for triangulation, with the common edge of the two triangles of each quad simply hidden for visualization?

how does Blender make it work for quadrangulation?

TL;DR: By triangulating polygons with a number of sides > 3.
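To illustrate what "triangulating" means for a single face, here is a minimal sketch in Python. It uses a simple fan triangulation, which is only an assumption for illustration: it is valid for convex polygons, and it is not necessarily the triangulation Blender itself chooses. The function name `fan_triangulate` is hypothetical.

```python
def fan_triangulate(face):
    """Split a polygon, given as a list of vertex indices, into triangles.

    Illustrative fan triangulation: only reliable for convex polygons.
    An n-sided face always yields n - 2 triangles.
    """
    if len(face) < 3:
        raise ValueError("a face needs at least three vertices")
    # Pivot on the first vertex and pair it with each following edge.
    return [(face[0], face[i], face[i + 1]) for i in range(1, len(face) - 1)]


quad = [0, 1, 2, 3]
print(fan_triangulate(quad))  # [(0, 1, 2), (0, 2, 3)]
```

The original polygon edges can still be the only ones drawn in the UV editor; the extra diagonals only matter to the solver.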

Rainy Day Reading: Necessarily so, because - triangles excepted - polygon vertices are not guaranteed to be co-planar. Consequently, a single mapping of the polygon from three-space to the texture plane cannot generally be established. A triangle, so long as its vertices are not co-linear, defines exactly one plane, so a distinct transform from the three-space triangle to a projected surface image can be found, given one of any number of projection schemes. By 'projection scheme', I mean some procedure for choosing scalings, shearings and rotations of the plane so that the 3-space triangle is flattened into a two-dimensional image. There are any number of schemes; Least Squares Conformal Mapping is one. They all work best with the unambiguous input of distinct planes, with particular orientations in three-space, so as a pre-processing step for all these schemes, polygons with more than three vertices are segmented into distinct triangles.
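As a rough illustration of "a triangle defines exactly one plane", the sketch below lays a single 3D triangle flat in its own plane without distorting it. This kind of per-triangle local frame is a common first step in parametrization methods, but the code is only a sketch under that assumption, not Blender's implementation; `triangle_to_plane` and the helper names are hypothetical.

```python
import math


def _sub(a, b):
    return (a[0] - b[0], a[1] - b[1], a[2] - b[2])


def _dot(a, b):
    return a[0] * b[0] + a[1] * b[1] + a[2] * b[2]


def _cross(a, b):
    return (a[1] * b[2] - a[2] * b[1],
            a[2] * b[0] - a[0] * b[2],
            a[0] * b[1] - a[1] * b[0])


def _normalized(a):
    n = math.sqrt(_dot(a, a))
    return (a[0] / n, a[1] / n, a[2] / n)


def triangle_to_plane(p0, p1, p2):
    """Return 2D coordinates of a 3D triangle, expressed in its own plane.

    p0 goes to the origin, p1 onto the positive x axis, and p2 to the
    side with positive y. Edge lengths and angles are preserved.
    """
    e1 = _sub(p1, p0)
    e2 = _sub(p2, p0)
    normal = _cross(e1, e2)
    if _dot(normal, normal) == 0.0:
        raise ValueError("degenerate triangle: vertices are co-linear")
    x_axis = _normalized(e1)
    # Perpendicular to both the normal and the x axis, so it lies in the plane.
    y_axis = _normalized(_cross(normal, x_axis))
    return ((0.0, 0.0),
            (math.sqrt(_dot(e1, e1)), 0.0),
            (_dot(e2, x_axis), _dot(e2, y_axis)))


print(triangle_to_plane((1, 2, 3), (4, 6, 3), (1, 2, 8)))
```

A quad with non-coplanar vertices has no such single frame, which is why it is split into triangles first.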

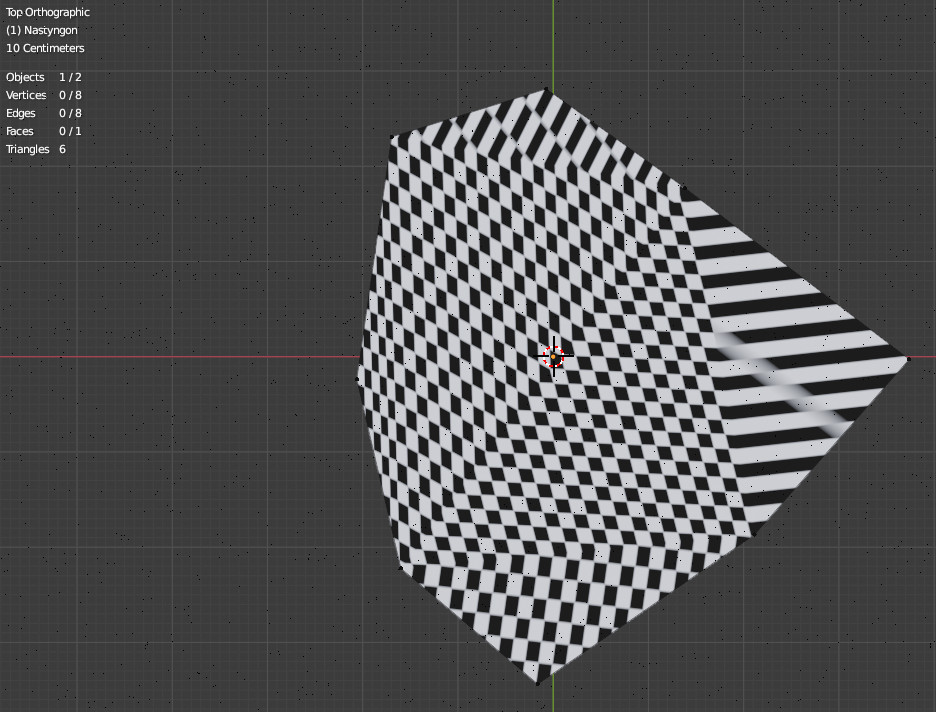

The effects of this triangulation can be seen in Blender with 'nasty' ngons, their vertices scattered randomly through three-space. Blender triangulated this eight-vertex polygon into six triangles and found a mapping transform for each. Texturing the polygon with a checkerboard makes each of the six mappings distinct, each one scaling, shearing and rotating the checkerboard pattern in a particular way (though the two leftmost triangles have very nearly identical transforms).
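The per-triangle transform described above can be made concrete as a 2x2 matrix. The following sketch assumes each triangle has already been laid flat in its own plane (2D points `q0`, `q1`, `q2`) and has UV coordinates assigned; it then recovers the linear map between the two. The function names are hypothetical. The second function computes the Cauchy-Riemann residual of that matrix, which is zero for a pure rotation plus uniform scale and grows with shear; LSCM-style methods minimise a quantity of this kind, summed over triangles, in a least-squares sense.

```python
def uv_jacobian(q0, q1, q2, uv0, uv1, uv2):
    """Return J as ((j00, j01), (j10, j11)) with uv - uv0 = J (q - q0)."""
    ax, ay = q1[0] - q0[0], q1[1] - q0[1]
    bx, by = q2[0] - q0[0], q2[1] - q0[1]
    det = ax * by - ay * bx
    if det == 0:
        raise ValueError("degenerate triangle in the plane")
    # Inverse of the edge matrix [[ax, bx], [ay, by]].
    i00, i01 = by / det, -bx / det
    i10, i11 = -ay / det, ax / det
    # UV edge vectors form the columns of the target edge matrix.
    ux, uy = uv1[0] - uv0[0], uv1[1] - uv0[1]
    vx, vy = uv2[0] - uv0[0], uv2[1] - uv0[1]
    return ((ux * i00 + vx * i10, ux * i01 + vx * i11),
            (uy * i00 + vy * i10, uy * i01 + vy * i11))


def conformal_residual(j):
    """Cauchy-Riemann residual: 0 for rotation plus uniform scale only."""
    (a, b), (c, d) = j
    return (a - d) ** 2 + (b + c) ** 2


flat = ((0, 0), (1, 0), (0, 1))
scaled = uv_jacobian(*flat, (0, 0), (2, 0), (0, 2))   # uniform scale by 2
sheared = uv_jacobian(*flat, (0, 0), (1, 0), (1, 1))  # horizontal shear
print(conformal_residual(scaled), conformal_residual(sheared))
```

In the checkerboard picture, triangles whose squares stay square correspond to a residual near zero, while visibly skewed squares correspond to a larger one.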

Hope this helps. Welcome to the board.

I see, thank you. What's the difference between 'angle_based' unwrap and 'conformal' unwrap, i.e. bpy.ops.uv.unwrap(method='ANGLE_BASED') vs bpy.ops.uv.unwrap(method='CONFORMAL')?

Conformal = LSCM (Least Squares Conformal Maps)

Angle Based = ABF (Angle Based Flattening)
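Both methods try to keep the angles of each triangle as close as possible to their values on the 3D surface; LSCM solves for the UV coordinates directly, while ABF treats the triangle angles themselves as the unknowns. The sketch below is not either algorithm. It only measures, for one triangle, how far the UV angles have drifted from the 3D angles, which is the kind of error both are trying to keep small. The function names are hypothetical.

```python
import math


def interior_angles(p0, p1, p2):
    """Interior angles in radians at p0, p1, p2 (points may be 2D or 3D)."""
    def angle_at(a, b, c):
        u = [bi - ai for ai, bi in zip(a, b)]
        v = [ci - ai for ai, ci in zip(a, c)]
        dot = sum(x * y for x, y in zip(u, v))
        norm = math.sqrt(sum(x * x for x in u)) * math.sqrt(sum(y * y for y in v))
        # Clamp to guard against rounding slightly outside [-1, 1].
        return math.acos(max(-1.0, min(1.0, dot / norm)))
    return (angle_at(p0, p1, p2), angle_at(p1, p2, p0), angle_at(p2, p0, p1))


def angle_distortion(tri_3d, tri_uv):
    """Sum of squared differences between 3D and UV interior angles."""
    a3 = interior_angles(*tri_3d)
    a2 = interior_angles(*tri_uv)
    return sum((x - y) ** 2 for x, y in zip(a3, a2))


tri = ((0, 0, 0), (1, 0, 0), (0, 1, 0))
same_shape = ((0, 0), (0.5, 0), (0, 0.5))   # scaled copy: angles unchanged
stretched = ((0, 0), (1, 0), (0, 0.25))     # squashed along v
print(angle_distortion(tri, same_shape), angle_distortion(tri, stretched))
```

Summing this measure over all triangles of a mesh gives a quick way to compare the output of the two unwrap methods on the same model.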

In more practical terms: I've found that Angle-Based is better when you need symmetrical UVs or UVs for organic objects. Conformal seems better for hard-surface objects with straight surfaces. You can sometimes get good results by using a combination of the two on different parts of a model and stitching the UVs together. That's based on my experience, so of course your mileage may vary! The default is Angle-Based.