Grease Pencil and Geometry Nodes Integration

As part of the upcoming Grease Pencil 3.0 project, Grease Pencil will use a new curve data-type representation (the same one used for the new Hair in Blender) and will support Geometry Nodes.

This design proposal explores how to integrate both systems. Two main lines of thought are considered:

- Implicit conversion

- Explicit conversion

Implicit conversion means you could connect a Grease Pencil geometry directly to a Curve node. Explicit conversion mirrors the internal data representation, which makes users more aware of the performance implications and gives them full control over the conversion.

Common design solutions

Both scenarios have some common elements.

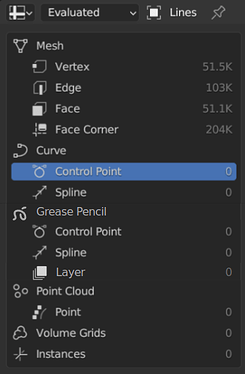

We will introduce Grease Pencil as a new geometry type in the Spreadsheet, with control points and splines (as for curves) and also layers.
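To make the Spreadsheet idea concrete, here is a minimal Python sketch of the three domains it would list for a Grease Pencil geometry. The classes `Curves`, `Layer` and `GreasePencil` and the `spreadsheet_counts` helper are hypothetical, not Blender's API; storing all control points in one flat list with per-spline offsets is an assumption modeled loosely on how curves geometry is laid out.

```python
from dataclasses import dataclass, field

# Hypothetical data model for illustration; these are not Blender classes.


@dataclass
class Curves:
    # All control points of all splines, stored in one flat list.
    positions: list = field(default_factory=list)
    # Spline i owns positions[offsets[i]:offsets[i + 1]].
    offsets: list = field(default_factory=lambda: [0])

    @property
    def points_num(self) -> int:
        return len(self.positions)

    @property
    def curves_num(self) -> int:
        return len(self.offsets) - 1


@dataclass
class Layer:
    name: str
    curves: Curves = field(default_factory=Curves)


@dataclass
class GreasePencil:
    layers: list = field(default_factory=list)

    def spreadsheet_counts(self) -> dict:
        """Element count per domain, as a Spreadsheet view might list them."""
        return {
            "Control Point": sum(layer.curves.points_num for layer in self.layers),
            "Spline": sum(layer.curves.curves_num for layer in self.layers),
            "Layer": len(self.layers),
        }


gp = GreasePencil([
    Layer("Lines", Curves([(0, 0, 0), (1, 0, 0), (2, 0, 0)], [0, 3])),
    Layer("Fill", Curves([(0, 0, 0), (0, 1, 0), (1, 1, 0), (1, 0, 0)], [0, 2, 4])),
])
print(gp.spreadsheet_counts())  # {'Control Point': 7, 'Spline': 3, 'Layer': 2}
```

Layers add a third domain on top of the two that curves already have, which is why the Spreadsheet would need a separate Layer view.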

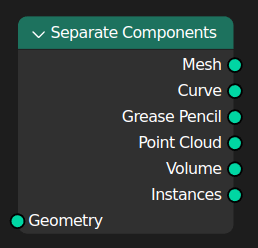

We also show Grease Pencil in the Separate Components node, in the same order as in the Spreadsheet:

Layers

Layers are an important element for controlling Grease Pencil. The details are not clear yet, but we will probably need layers as a new data type (socket) as a way to restrict Geometry Nodes operations.

We also need to make sure that a node-group can be reused for different Grease Pencil objects. That means that layers should, most of the time, be definable at the modifier level as Named Attributes.

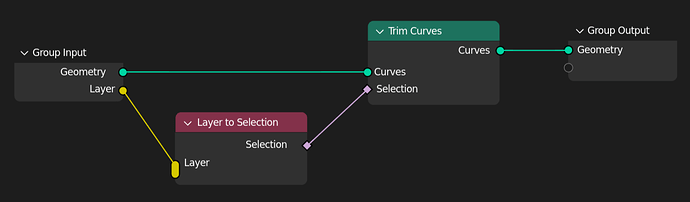

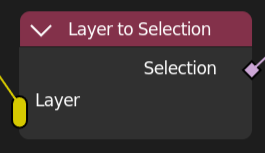

A Layer to Selection node could even accept multiple layers as input. Note that in this design layers are treated as data (circle socket), but they may need to be fields (diamond socket).
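A minimal Python sketch of what a Layer to Selection node with multiple layer inputs might compute: one boolean per spline, true for every spline owned by one of the chosen layers. The function name, its input format (layer names mapped to spline counts, in layer order) and the choice to silently ignore unknown layer names are assumptions for illustration.

```python
# Hypothetical "Layer to Selection" node; not a Blender API.
def layer_to_selection(layer_spline_counts: dict, layers: list) -> list:
    """Return one boolean per spline, True if the spline's layer is selected.

    layer_spline_counts maps layer name -> number of splines in that layer,
    in layer order (Python dicts preserve insertion order).
    Names in `layers` that do not exist simply select nothing.
    """
    wanted = set(layers)
    selection = []
    for name, count in layer_spline_counts.items():
        # Every spline of a layer gets the same value.
        selection.extend([name in wanted] * count)
    return selection


print(layer_to_selection({"Lines": 1, "Fill": 2, "Sketch": 1}, ["Fill", "Sketch"]))
# [False, True, True, True]
```

Here the layer input is plain data, matching the circle-socket variant; a field-based variant would instead be evaluated per spline by whichever node consumes it.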

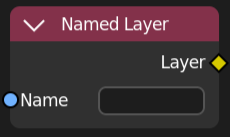

Although layers will be defined at the modifier level, at some points in the workflow (or for advanced use cases) it is desirable to define a layer inside the node-tree. A Named Layer node could therefore work similarly to the Named Attribute node.
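A Named Layer node could be sketched the same way: look up a layer by a name typed inside the node-tree and report whether it was found. `LayerRef` and `named_layer` are hypothetical names; the boolean result is modeled on the Exists output of the Named Attribute node.

```python
from dataclasses import dataclass
from typing import Optional, Tuple


@dataclass(frozen=True)
class LayerRef:
    """Hypothetical value carried by a layer socket."""
    index: int
    name: str


def named_layer(layer_names: list, name: str) -> Tuple[Optional[LayerRef], bool]:
    """Find a layer by name and return (layer, exists)."""
    for index, layer_name in enumerate(layer_names):
        if layer_name == name:
            return LayerRef(index, layer_name), True
    # Missing layer: no reference, and the exists flag is False.
    return None, False


print(named_layer(["Lines", "Fill"], "Fill"))  # (LayerRef(index=1, name='Fill'), True)
print(named_layer(["Lines", "Fill"], "Ink"))   # (None, False)
```

Because the name is a hard-coded string, a node-group built this way is tied to that layer name, which is why modifier-level inputs remain the default in this proposal.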

Implicit conversion

The idea is to allow Grease Pencil geometry to be used directly in any Curve-compatible node (trim, fillet, resample, reverse, …). This is similar to how both Mesh and Curve geometries can be used in Point nodes (e.g., Points to Volume).

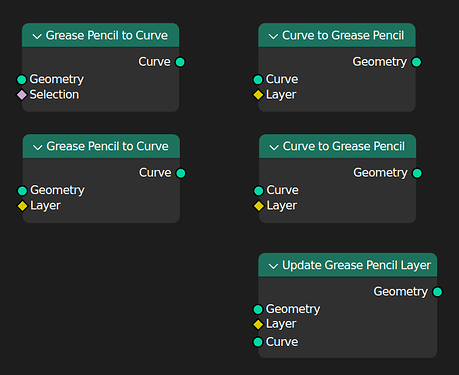
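Implicit conversion could be sketched as a curve-only operation that the node system runs on each layer's curves, so the user never sees a conversion step. The `Curves` class, `reverse_curves` (standing in for a node such as Reverse Curve) and `apply_to_layers` are hypothetical names for illustration.

```python
from dataclasses import dataclass, replace
from typing import Callable, Dict, Tuple


@dataclass(frozen=True)
class Curves:
    positions: Tuple  # control points of all splines, flattened
    offsets: Tuple    # spline i spans positions[offsets[i]:offsets[i + 1]]


def reverse_curves(curves: Curves) -> Curves:
    """A curve-only operation: reverse the point order of every spline."""
    out = []
    for start, end in zip(curves.offsets, curves.offsets[1:]):
        out.extend(reversed(curves.positions[start:end]))
    return replace(curves, positions=tuple(out))


def apply_to_layers(layers: Dict[str, Curves],
                    op: Callable[[Curves], Curves]) -> Dict[str, Curves]:
    """Implicit conversion: run a Curve node's operation on every layer's curves."""
    return {name: op(curves) for name, curves in layers.items()}


layers = {"Lines": Curves((0, 1, 2, 3, 4), (0, 3, 5))}
print(apply_to_layers(layers, reverse_curves)["Lines"].positions)  # (2, 1, 0, 4, 3)
```

The per-layer loop is hidden from the user, which is convenient, but it is also where any performance cost of the implicit approach would sit.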

Explicit conversion

The other approach would be to have nodes that convert between Grease Pencil and curves.
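Explicit conversion could be sketched as a pair of nodes: one flattens all layers into a single curves geometry and stores each spline's layer in an attribute, the other splits the curves back into layers using that attribute. The function names and the per-spline `layer` attribute are assumptions for illustration; strokes are plain lists of points here.

```python
from dataclasses import dataclass, field
from typing import Dict, List


@dataclass
class Curves:
    """Flat curve geometry with a hypothetical per-spline 'layer' attribute."""
    positions: list = field(default_factory=list)
    offsets: list = field(default_factory=lambda: [0])  # len == splines + 1
    layer: list = field(default_factory=list)           # one layer name per spline


def grease_pencil_to_curves(gp: Dict[str, List[list]]) -> Curves:
    """Flatten all layers into one Curves, recording each spline's layer."""
    out = Curves()
    for layer_name, strokes in gp.items():
        for stroke in strokes:
            out.positions.extend(stroke)
            out.offsets.append(len(out.positions))
            out.layer.append(layer_name)
    return out


def curves_to_grease_pencil(curves: Curves) -> Dict[str, List[list]]:
    """Split curves back into layers, grouping splines by their 'layer' attribute."""
    gp: Dict[str, List[list]] = {}
    for i, layer_name in enumerate(curves.layer):
        start, end = curves.offsets[i], curves.offsets[i + 1]
        gp.setdefault(layer_name, []).append(curves.positions[start:end])
    return gp


gp = {"Lines": [[0, 1, 2]], "Fill": [[3, 4], [5, 6, 7]]}
flat = grease_pencil_to_curves(gp)
print(flat.offsets, flat.layer)  # [0, 3, 5, 8] ['Lines', 'Fill', 'Fill']
print(curves_to_grease_pencil(flat) == gp)  # True
```

A layer with no strokes does not survive this round trip, because no spline carries its name.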

It is not clear to me whether such nodes would be a Curve to Grease Pencil node (whose output could then be joined with the other geometry types) or a node that updates existing Grease Pencil layers.

Here are a few ideas around that:

Final thoughts

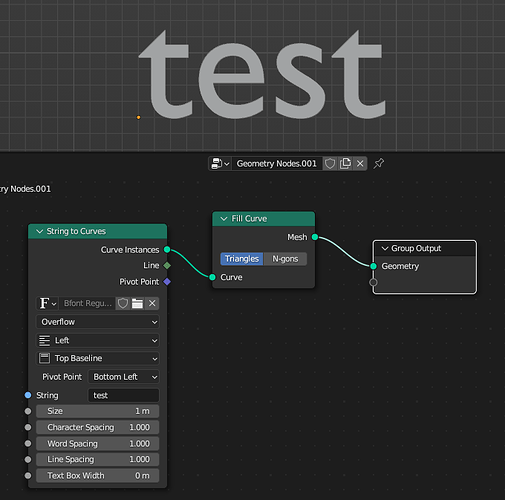

The best approach would be to try to replicate the existing Grease Pencil modifiers with Geometry Nodes. That would give a better view of what else may be needed or should be considered.

At its core, though, I believe most of the problems boil down to the simple case. If you want to explore more complex scenarios, please share the results of your considerations here.

I also need the rest of the Geometry Nodes team to assess whether the two solutions differ in performance. One of the appeals of the new Grease Pencil design is that it is more robust and performance-efficient.