Hi @brecht @LazyDodo or anyone who can point me in the right direction.

If you have a displacement node connected to the displacement input of the material output node, what would be the best approach to get this data as a mesh?

It's rendering in the viewport, right?

So how can I access that data, and can you please point me to the code where this gets executed?

Basically applying the displacement node.

Any hints to a good approach would be welcome.

Thanks in advance.

I know of a similar open task for it, nobody has (yet) decided to work on it though.

https://developer.blender.org/T54656

Again, I would only like to do this: convert the vector displacement output that we get in the viewport rendering into a 3D mesh, no more, no less, with the click of a button: "Convert to mesh".

The best way to do this is to bake the displacement to a texture. You’ll need to normalize it to the 0-1 range (it’s probably not normalized, and almost certainly contains some negative values, so you’ll probably have to use a vector normalize node, then add 1 to the vector and then scale the vector by 0.5).

Once you have baked out a texture, you can just use the Displace modifier. You’ll need to adjust the midlevel and strength of the modifier.

You might be able to get raw data without dealing with the math if you use a .exr image, but I wouldn’t bet on it.
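The remapping and the matching modifier settings can be sketched in plain Python. Two assumptions are made here and neither comes from the post above: the vectors are divided by a known maximum magnitude (`max_abs`, a hypothetical value) rather than normalized to unit length, since unit-length normalization would discard the displacement amount; and the Displace modifier is assumed to evaluate `(value - midlevel) * strength` per channel in "RGB to XYZ" mode.

```python
def encode(displacement, max_abs):
    """Map components in [-max_abs, max_abs] to the 0-1 range of a texture."""
    return tuple(c / (2.0 * max_abs) + 0.5 for c in displacement)


def displace_decode(rgb, midlevel, strength):
    """Assumed Displace modifier behaviour: (value - midlevel) * strength per channel."""
    return tuple((c - midlevel) * strength for c in rgb)


max_abs = 2.0                      # hypothetical largest displacement component
original = (0.5, -1.25, 2.0)       # hypothetical displacement vector

rgb = encode(original, max_abs)    # every channel now lies in [0, 1]
restored = displace_decode(rgb, midlevel=0.5, strength=2.0 * max_abs)

print(rgb)
print(restored)
```

Under these assumptions a midlevel of 0.5 and a strength of twice the maximum magnitude undo the encoding, which is why both values need adjusting after the bake.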

Thanks for the answers so far.

I don't think it's possible to bake a texture when the vector displacement produces overlapping geometry, for example, so I don't want to bake it.

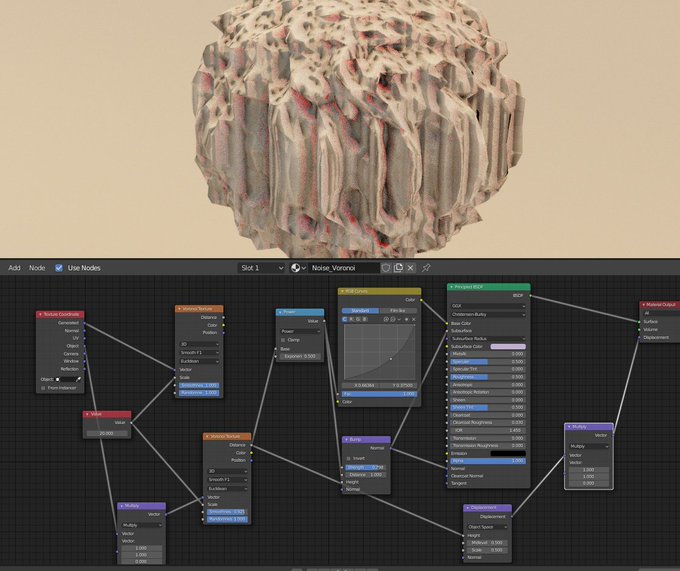

The picture is just an example for general vector displacement.

I want to convert the vector displacement to a mesh (vertices,edges,faces).

Which APIs do I need, and where can I find the code where this happens in the viewport? It must exist, because the viewport is rendering the vector displacement.

Would this be possible with Python, or do I need to write it in C?

What would be the best approach?

Your best bet is to bake the texture. That is what Cycles is doing in the background. I think it even uses the same basic code design as the Displace modifier.

There is no API access to this.

If you need to bake to an object that isn’t UV’d, take a look at the script I wrote for this purpose: Using Python to bake displacement for Cycles— How does Cycles displace a point?


Ah ok, thanks for the link.

What color space are you using?

The link in the 4th comment results in a 404 error.

Did you make any progress on that?

I mostly only did some research on it, which I wrote about in another thread. Basically, I know what to do for the simple case, but I have no time to actually do it.

Color space should be linear for vector images.
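To illustrate why the color space matters for vector data, here is a minimal sketch using the standard sRGB decoding curve. It only shows the arithmetic: a stored value of 0.5 that is interpreted as sRGB no longer reads back as 0.5, so the displacement would be distorted.

```python
def srgb_to_linear(c):
    """Standard sRGB decoding curve for a single channel in [0, 1]."""
    if c <= 0.04045:
        return c / 12.92
    return ((c + 0.055) / 1.055) ** 2.4


stored = 0.5                          # value written into the vector image
read_as_srgb = srgb_to_linear(stored)  # what you get if the image is tagged sRGB
read_as_linear = stored                # what you get if it is treated as linear data

print(read_as_srgb, read_as_linear)
```

In Blender this would presumably mean setting the image's color space to a data or linear option (for example via `image.colorspace_settings.name`) before using it for displacement.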

The link is irrelevant; what you want is the code in the first post. IIRC my code was 100% right at that point, and I just needed to make some adjustments to the Cycles material to make it work correctly.

Also, I feel kind of stupid – I wrote the script to bake sculpted displacement… I don’t actually think it will help you now that I remember what it is!

Good to know,

thanks for your time anyway.

Hi! For it to work correctly you’ll need to bake 3 maps:

- Positions for the displaced geometry (the geometry position connected to the output surface will do). Bake with the Emit bake type.

- Positions for the base geometry (same thing, but with nothing connected to the output displacement). Bake with the Emit bake type.

You should bake both with the same subdivision level.

- Result positions, obtained by subtracting the base (low-res) positions from the displaced (high-res) positions with a Vector Math node set to Subtract. Bake with the Emit bake type.

Apply a Displace modifier and set the result positions map as its texture. Change the coordinates to UV, pick the UV map if needed, set Direction to ‘RGB to XYZ’, leave strength at 1 and set midlevel to 0.

This should match the render displacement quite accurately, depending on the subdivision level.
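The arithmetic behind the three maps can be sketched in plain Python. The positions below are made-up sample values, both maps are assumed to be expressed in the same coordinate space, and the Displace modifier is assumed to add `(value - midlevel) * strength` per channel in "RGB to XYZ" mode.

```python
def subtract(a, b):
    """Component-wise a - b, the job of the Vector Math Subtract node."""
    return tuple(x - y for x, y in zip(a, b))


# Hypothetical baked positions, sampled at the same points on both meshes.
base_positions = [(0.0, 0.0, 0.0), (1.0, 0.0, 0.0), (1.0, 1.0, 0.0)]
displaced_positions = [(0.0, 0.0, 0.25), (1.5, 0.0, 0.5), (1.0, 1.0, -0.75)]

# Third map: displaced minus base gives the offset of every point.
offsets = [subtract(d, b) for d, b in zip(displaced_positions, base_positions)]

# Assumed modifier evaluation with midlevel 0 and strength 1.
midlevel, strength = 0.0, 1.0
rebuilt = [
    tuple(b + (o - midlevel) * strength for b, o in zip(base, offset))
    for base, offset in zip(base_positions, offsets)
]

print(offsets)
print(rebuilt == displaced_positions)
```

Because the offsets can be negative or larger than 1, the result map would likely need a float image format so the values are not clamped, and with midlevel 0 and strength 1 no further rescaling is needed.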

Hope this helps.

Cheers!
