Here I’ll post weekly reports on my project.



Preparations

I had to do quite some preparations before the actual coding period started:

- Because of failing Windows updates I wasn’t able to install the Windows OpenXR development preview runtime that I wanted to use as my main testing runtime. So after my proposal got accepted, I did a fresh Windows 10 install.

- With that done, I was able to test the examples from the OpenXR SDK and properly investigate its code.

- During that I noticed an issue for the GSoC project: The Windows OpenXR runtime only supports DirectX, no OpenGL. Support for this runtime is important as it enables use of some common devices. And it’s the runtime I wanted to test on (the FOSS Monado doesn’t have important features like positional tracking - yet!).

Some workarounds should be possible, but of course performance is a concern. Luckily there is a GPU extension (NV_DX_interop) to share resources between OpenGL and DirectX that seems to be available on common recent hardware. I didn’t manage to do any tests with it, so I don’t know how stable it is nowadays.

- Because of this issue, I spent some time getting into DirectX. Looking at the Blender code it shouldn’t be too hard to get a DirectX context to work in a separate window. Using the extension we should then be able to use the offscreen texture of the OpenGL rendered viewport and draw that using DirectX.

- Updated the project proposal:

- Removed the goals relating to Add-on based XR-UI support. It was agreed with my mentor (and other developers) that focus should be put on getting stable and well-performing VR support instead.

- Updated the project schedule for the new focus and added time to work on the DirectX interoperability.

- Added a section describing the role of OpenXR runtimes.


Week 1 (May 27 - June 1):

To use OpenXR, mechanisms to dynamically link to the OpenXR runtime need to be set up. The best way to do this is via an OpenXR loader. Focus for this week was to get OpenXR up and running via the loader provided by the OpenXR SDK.

- Added required parts of the OpenXR SDK to the Blender source so that the branch can be built without setting up any additional dependencies. This was a bit of a fight, but gave me a nice introduction to the loader’s design. Works on both Windows & Linux (06d52afcda, e65ba62c37)

- Based on this experience, I don’t recommend using this approach for a master integration. It smells like a maintenance nightmare. So I added an alternative version to compile with the OpenXR loader, which attempts to use a system installed SDK. It can be enabled with a CMake flag. (864abbd425)

I checked on this with other developers here: GSoC 2019: Core Support of Virtual Reality Headsets through OpenXR.

- Started working on the window-manager XR-API. It currently attempts to create, store and destroy an OpenXR instance (41e2f2e75f). Such an instance is used to manage the lifetime of the OpenXR runtime connection. On Windows 10, I can successfully connect to the Windows Mixed Reality OpenXR Runtime (development preview) by now.

- Added basic functionality to query OpenXR extensions and additional API layers. We don’t use that yet, but will soon, as extensions are used to manage graphics library support. (5d5ad5d6dd)

- Tried to compile the Monado runtime on Linux but had to update my distro to a not yet released version (Debian Buster) - Monado requires this unfortunately. Still had errors compiling Monado, but didn’t really look into them yet.

Also, I spent a few great days at the Libre Graphics Meeting. Bit of an eye-opening experience: They all seem to look up to Blender. Very motivating.


Heads up: I’m on a camping trip over the weekend. Brought my laptop with me, but after the mentioned OS upgrade my network config is messed up, so I didn’t manage to add a hotspot connection like I planned. I have some changes to be pushed which are part of last week’s work. Once I’m back on Monday I’ll push them and write a proper report. I also chatted with Dalai (as my mentor) on the progress and issues I’m facing. Nothing worrisome, I expected such problems.

Sorry about the delay of course, these are unusual circumstances.


Week 2 (June 2 - June 8):

Focus for the week was working on VR session management, as planned. This should work as expected now, although testing was difficult for a number of reasons:

- OpenXR sessions depend on a low-level graphics context, which isn’t set up yet.

- For the Windows Mixed Reality OpenXR runtime, DirectX drawing is required (planned for next week).

- Getting the Monado OpenXR runtime to compile and run was problematic:

  - Requires an unreleased version of Debian, which broke my dev machines/environment quite a bit.

  - A bug in the OpenXR SDK 0.9 release broke OpenGL usage.

  - Getting all dependencies installed and usable was troublesome.

  - Launching the SDK’s test apps crashes in Vulkan code for me (Monado uses Vulkan for compositing).

  - Monado/OpenHMD requires manual changes in udev rules depending on the HMD device used.

  - …

No major issues, but annoyances that made testing hard and took time away from coding… Well, it’s bleeding edge software and I expected such hiccups.

I still got enough done to be fully on schedule:

- Session creation and destruction (35c9e3beed6)

- Basic handling of session events (e.g. session ready event, session pause event). (0d1a22f74fc, 5e642bfea1)

- Managing of OpenXR graphics bindings through runtime extensions - required for correct OpenXR session creation. (20a1af936d, eb0424c946)

So VR sessions are there now, time to jump into rendering!
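To make the session event handling above a bit more concrete, here is a minimal sketch of how state-change events could drive a session. It is not the code from the branch: the `SessionState` values are simplified stand-ins for the OpenXR session states, and the `Session` class and its `handle_state_change()` method are hypothetical names used for illustration only.

```cpp
#include <string>
#include <vector>

// Hypothetical, simplified session states; the real OpenXR enum has more values.
enum class SessionState { Idle, Ready, Running, Paused, Stopping };

// Hypothetical session wrapper that reacts to state-change events from a runtime.
class Session {
 public:
  // Handles one state-change event. Returns false once the session should end.
  bool handle_state_change(SessionState new_state)
  {
    state_ = new_state;
    switch (new_state) {
      case SessionState::Ready:
        // The runtime is ready for us: begin the session so rendering can start.
        running_ = true;
        log_.push_back("begin");
        return true;
      case SessionState::Paused:
        // Keep the session alive, but note that the runtime paused it.
        log_.push_back("pause");
        return true;
      case SessionState::Stopping:
        // The runtime (or the user) asked us to stop: end the session.
        running_ = false;
        log_.push_back("end");
        return false;
      default:
        return true;
    }
  }

  bool is_running() const { return running_; }
  SessionState state() const { return state_; }
  const std::vector<std::string> &log() const { return log_; }

 private:
  SessionState state_ = SessionState::Idle;
  bool running_ = false;
  std::vector<std::string> log_;
};
```

An event polling loop would call `handle_state_change()` for every session event it receives and tear the session down once it returns false.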

Next week

Next week is going to be interesting since I’m going to spend time on DirectX compatibility. As outlined in the schedule, this is an important feature but it’s quite a bit of an unknown.

The goal is to be able to draw the OpenGL viewport into a DirectX window with minimal overhead.


Week 3 (June 9 - June 14):

Worked on DirectX compatibility as per schedule. In short, the reason for this is to support the rather important Windows Mixed Reality OpenXR runtime.

- Allow DirectX GHOST context creation (1404edbe3a).

- Support DirectX windows and open one when starting the VR session (fc8127d070, 34fa0f8ac6).

May not necessarily stay that way, it’s mostly for testing. Maybe useful to keep for users too though.

- Retarget OpenGL drawing to a window offscreen context when drawing a DirectX window (16366724bd).

- Refactored graphics binding logic for better resource management and more flexible & simple code (796994d222).

This may very well be the first Blender window drawing with DirectX.

Unfortunately, I didn’t get the last step done yet: Sharing the OpenGL offscreen buffer with DirectX to display it with the latter, using a WGL extension. I got something working but it’s very glitchy. I guess that’s more due to my insufficient knowledge of OpenGL framebuffer management than to the extension though.

Next Week

Finally, it’s time to plug in the HMD and start pushing pixels to it! The next two weeks should be mostly about getting the viewport rendered in the HMD. First, setting up OpenXR swapchains to get framebuffers displayed on the HMD. Then digging into the draw manager to make it render based on HMD input. There’s more to it, e.g. managing spaces and frame syncing, but those are the main points.

Of course, I’m going to work on the mentioned final step for DirectX interoperability too.

I’ve also decided to port the OpenXR access layer to GHOST. That means basically porting the contents of windowmanager/xr/ to C++ GHOST. Couple of reasons for this:

- OpenXR rendering requires low level OS/graphics-lib specific data that we currently can’t access in C. It should preferably stay in GHOST. E.g. see XrGraphicsBindingOpenGLXlibKHR.

- C++ seems much more suited for what I’m doing.

std::vector for dynamic extension array building rather than messy raw-array violence; state management can be improved through objects; RAII for aborting sessions safely in case of errors; etc.

- Considering the level OpenXR operates on (XR-event polling, graphics bindings, dynamic linking to platform specific runtimes, etc.), keeping it in GHOST seems reasonable.

We may still want to keep a small higher-level API wrapped around GHOST_Xr calls.

I will work on this first as it will make some further work much easier.
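As an illustration of the std::vector point above, here is a small sketch of building the list of extensions to enable. It is an assumption-laden example rather than the branch code: `select_extensions()` and `as_c_array()` are hypothetical helper names, and the runtime query that would fill `available` is left out.

```cpp
#include <algorithm>
#include <string>
#include <vector>

// Returns the subset of `wanted` extension names that the runtime reports as
// available, in the order given by `wanted`. Hypothetical helper for illustration.
std::vector<std::string> select_extensions(const std::vector<std::string> &available,
                                           const std::vector<std::string> &wanted)
{
  std::vector<std::string> enabled;
  for (const std::string &name : wanted) {
    if (std::find(available.begin(), available.end(), name) != available.end()) {
      enabled.push_back(name);
    }
  }
  return enabled;
}

// C APIs typically want an array of C strings plus a count. The returned pointers
// stay valid only as long as `names` is alive and unmodified.
std::vector<const char *> as_c_array(const std::vector<std::string> &names)
{
  std::vector<const char *> ptrs;
  ptrs.reserve(names.size());
  for (const std::string &name : names) {
    ptrs.push_back(name.c_str());
  }
  return ptrs;
}
```

The vector grows as needed, so no manual counting or reallocating of raw arrays is required, and `data()` plus `size()` on the result can be handed to a C-style create-info struct.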


Week 4 (June 15 - June 21):

Exam phase started and hit me hard this week, so very stressful days here. I didn’t get as much done as I hoped to, but again enough to stay on schedule.

- Main achievement: Finishing the DirectX compatibility layer (637b803b97, 71c7d61808). This is a Blender DirectX window rendering to an offscreen OpenGL context, and then sharing the result with DirectX for presenting.

What this allows us is to target the Windows Mixed Reality platform, which includes one of the currently two available OpenXR runtimes - the much more advanced one. It is also the platform I use for most testing.

The implementation uses shared resources and GPU side framebuffer blitting. That should be as fast as it can get. I expect virtually no performance overhead. It’s made possible with a WGL extension (WGL_NV_DX_interop) which, although rarely used, should be available on most modern-ish hardware.

- Move OpenXR access API from WM to GHOST/C++ (f30fcd6c85, 4eeb7523e9, d15d07c4f6). Reasons outlined in previous report.

- Pass low level graphics library data (GLX, WGL, D3D) to OpenXR graphics bindings (9d29373b06, b18f5a6a81, 2ee9c60c20)

- Use lazy initialization/creation of OpenXR instances (a8519dbac2). Avoids costs of OS wide XR configuration reading and dynamic linking on startup. Also, don’t pay for what you don’t use!
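The lazy creation mentioned above follows a common pattern, which could be sketched as below. This is only an illustration: `Instance` is a stand-in for an expensive resource such as a runtime connection, and `LazyInstance` is a hypothetical name, not a type from the branch. The counter exists purely to make the creation observable.

```cpp
#include <memory>

// Stand-in for an expensive resource such as an XR runtime connection.
struct Instance {
  explicit Instance(int &creation_counter) { ++creation_counter; }
};

// Hypothetical holder that creates the instance on first use only.
class LazyInstance {
 public:
  explicit LazyInstance(int &creation_counter) : counter_(creation_counter) {}

  // Creates the instance on the first call, then returns the same one afterwards.
  Instance &get()
  {
    if (!instance_) {
      instance_ = std::make_unique<Instance>(counter_);
    }
    return *instance_;
  }

  bool is_created() const { return instance_ != nullptr; }

 private:
  int &counter_;
  std::unique_ptr<Instance> instance_;
};
```

Nothing is created at startup; the cost is only paid when something actually asks for the instance, e.g. when a VR session is started.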

Next Week

Continue working on rendering: Set up OpenXR swapchains, query and use spaces from OpenXR, frame timing, etc. Hopefully within one or two weeks I’ll see a viewport render in my HMD.

One question I didn’t think of earlier: When we do the entire VR view rendering offscreen, who owns the viewport region? Not tying this to the usual wmWindowManager->wmWindow->WorkSpace->bScreen chain would open a can of worms. Maybe introduce a virtual window type? Interesting to look into, but not a hard target for the week.

Exams/deadlines will keep me busy for two more weeks. I’m still trying/intending to get the expected 30h per week done though.


Week 5 (June 22 - June 30) - 1st Milestone reached

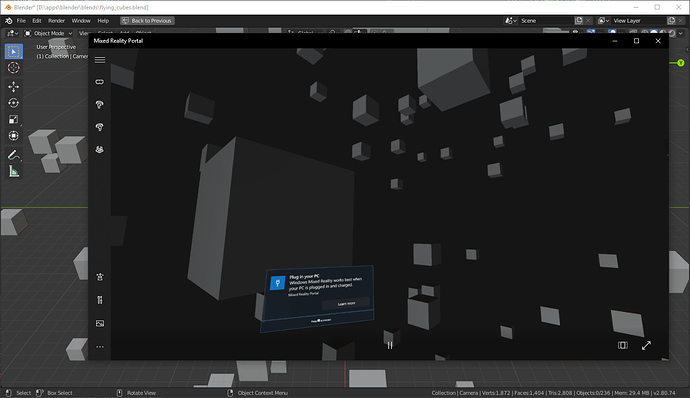

Here we go, Blender rendering a viewport to a (in this case simulated) HMD screen!

Screenshot shows WMR device simulation, but it works with a real device too! And it’s awesome! Tweet with proper screencast will follow. The screenshot also shows a nice benefit from using official runtimes: They can extend the experience as useful. E.g. here Windows inserts a notification into the VR scene.

To my delight, performance seems quite fine for reasonably complex scenes. Further speedups are certainly possible, but it’s a nice start. This basically means the minimum viable product is reached, as defined in my project proposal.

Once again, this has been a fight. Patch needs some cleanup and isn’t committed yet. Note that this uses the DirectX compatibility layer. OpenGL only drawing will need a few more tweaks to work.

Changes done to get there:

- OpenXR swapchain and swapchain images creation (4cfc3caa09, d749e8a2c4)

- OpenXR frame timing, views, spaces and compositing layers management (8a676e8fa9, 6b43c82cb5, 867007d9d7)

- Added wmSurface type and API, as a container to manage non-window drawable surfaces (cf4799e299). Manages OpenGL and GPU context and allows drawing an offscreen viewport outside of the normal window drawing. Means we don’t need to set up the wmWindow → WorkSpace → bScreen → ScrArea → ARegion chain just to draw a viewport.

- Support purely offscreen rendered VR Sessions (no separate Blender VR session window required anymore - 231dbd53bf).

- Draw an offscreen viewport using the new wmSurface type (109be29e42)


Next Week

As per schedule: Add various debugging/validation tools. This is really needed to make further work easier. I’d also like to work on good error handling (e.g. fail with good user message when no compatible OpenXR runtime is found).

Besides that, rendering needs some polish and OpenGL only drawing needs to be finished.


Week 6 (July 1 - July 7)

Due to a bigger assignment deadline on Friday, I didn’t manage to do much over the week. Tried to catch up a bit over the weekend, but outcome is small because of yet some more annoying mysteries I had to solve. On the positive side, the worst part of my exam phase is over now. So starting this week I should have more time and brainpower to spend on this project. I’ll also try to publish all further weekly reports on Fridays.

So there are only a few things I’ve done:

- Use camera position/rotation as base pose for VR sessions (084a33ca94). So with a camera set up, the session starts in a sane perspective now.

- End sessions properly, no matter if done through Blender or the runtime (146ef0dd1e). Fixes the issue of sessions crashing on second start.

- Refactor session code to use RAII/deterministic-destruction for all resources (6904ab10f4). That means that soon, whenever an error occurs, the session can end gracefully without leaking resources or affecting the rest of Blender. Note that we’re still missing OpenXR error handling to trigger graceful destruction where needed. So OpenXR errors still lead to undefined behavior.
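For readers less familiar with RAII, the idea of deterministic destruction can be sketched as follows. This is a minimal illustration under assumptions, not the session code itself: `ScopedResource` and `SessionResources` are hypothetical names, and the `released` log only exists to make the destruction order visible.

```cpp
#include <string>
#include <utility>
#include <vector>

// Hypothetical RAII wrapper: records the release of a named resource in its destructor.
class ScopedResource {
 public:
  ScopedResource(std::string name, std::vector<std::string> &released)
      : name_(std::move(name)), released_(released)
  {
  }
  ~ScopedResource() { released_.push_back(name_); }

  // Owning wrappers should not be copied, or the resource would be released twice.
  ScopedResource(const ScopedResource &) = delete;
  ScopedResource &operator=(const ScopedResource &) = delete;

 private:
  std::string name_;
  std::vector<std::string> &released_;
};

// Members are destroyed in reverse declaration order, so the swapchain is released
// before the session, and the session before the graphics binding.
struct SessionResources {
  explicit SessionResources(std::vector<std::string> &released)
      : graphics_binding("graphics_binding", released),
        session("session", released),
        swapchain("swapchain", released)
  {
  }

  ScopedResource graphics_binding;
  ScopedResource session;
  ScopedResource swapchain;
};
```

Whenever a `SessionResources` object goes out of scope, whether through a normal return or an exception, everything is released in a well-defined order without any manual cleanup code.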

I’m still planning to do a nicer demo of the current state of things. For now, you can check the following.

Regular VR session using Windows Mixed Reality OpenXR runtime:

WMR portal switching from own session to Blender session:

Both videos use headset simulation. Didn’t find a nicer way to screen capture this yet. Lighting is still too dark.

Next week

Continue work from this week (stability, debugging/validation tools, rendering polish). This was planned to take two weeks, so I’m still on schedule.

Worth mentioning that the reason to work on this stuff now is to prepare the project for initial user testing. Users would have a hard time testing if Blender crashed every few clicks. Also, I need ways to debug issues they find.


Week 7 (July 8 - July 12)

Worked on stability, debugging and validation tools, as well as some rendering refinements. More concretely:

-

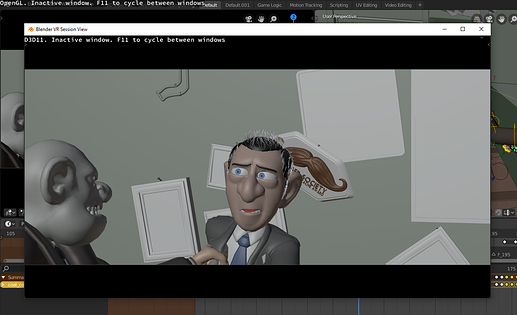

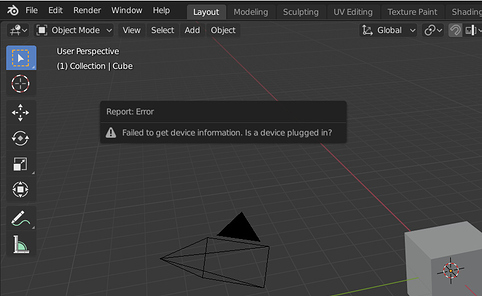

Errors in OpenXR or GHOST_Xr code will now trigger specific error message popups and cause the VR session to be canceled cleanly (330f062099, 3a2e459f58).

For example this is the error shown if we successfully connected to a OpenXR runtime, but no HMD is found:

- Refactored GHOST_XrContext code for consistency and good error handling (c1e9cf00ac).

- Check if GPU driver meets requirements of the OpenXR runtime (67d6399da6, 09872df1c7). If not, fail with specific error message.

- Allow enabling the OpenXR API validation layer (b98896ddd9).

Requires some set up steps to run. Needs more work to be usable out of the box.

- Introduce --debug-xr command line option for verbose debug prints (b216690505, 6e3158cb00).

- Enable and use the OpenXR debug extension for --debug-xr (84d609f67b).

It allows creating a messenger through which OpenXR can submit error messages to us.

Although Windows Mixed Reality lists the extension as available, it’s apparently incomplete and causes OpenXR instance creation to fail. Disabled it on Windows for now (99560d9657).
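The error handling described in this list can be illustrated with a small sketch that turns failing result codes into exceptions and reports a message to the caller. Everything here is hypothetical and simplified: the `Result` enum, the `XrError` exception, `check()` and `start_session_or_report()` are illustration names, not the API used in the branch, and the message text in the usage below is made up.

```cpp
#include <stdexcept>
#include <string>

// Hypothetical result code, loosely modeled on C APIs that return status values.
enum class Result { Success, RuntimeFailure, NoDeviceFound };

// Hypothetical exception type carrying a user-facing message.
class XrError : public std::runtime_error {
 public:
  using std::runtime_error::runtime_error;
};

// Throws with a specific message if `result` signals failure.
inline void check(Result result, const std::string &message)
{
  if (result != Result::Success) {
    throw XrError(message);
  }
}

// Runs the session start callback. Returns an empty string on success, or the
// message a UI could show in an error popup if starting failed.
template<typename StartFn> std::string start_session_or_report(StartFn start)
{
  try {
    start();
    return "";
  }
  catch (const XrError &err) {
    // Stack unwinding has already destroyed objects created inside start() here.
    return err.what();
  }
}
```

A call such as `start_session_or_report([] { check(Result::NoDeviceFound, "No head-mounted display found"); })` would return the message instead of crashing, which is roughly the behavior a clean session cancel needs.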

Next week

The branch should now be mostly ready for some initial user testing. I’m going to write a page with instructions. However, I’m afraid only the Windows Mixed Reality runtime can be used for testing. Monado is complicated to get running and appears rather unstable.

So with this user feedback, I’m going to continue ironing out bugs and make the branch rock stable.

I still need to address some further things:

- Rendering is still too dark (still don’t know what causes this; hard to debug)

- I wasn’t able to test OpenGL rendering - the Monado runtime still doesn’t want to run for me.

- The OpenXR API validation layer doesn’t work out of the box (environment variables have to be set).


Week 8 (July 13 - July 19)

Seems like I’ll have to open another report with excuses… I made the huge mistake of thinking I could take a break from exam preparations last week, so this week things struck back: Finishing up an assignment took much more time than expected, leaving me with very little time to prepare for an exam today. Needless to say, I had to focus on that and neglect GSoC.

I am still on schedule, and I can probably be satisfied with the overall project progress. But I had thought that by now I could focus more on the project and less on studies.

Here are the bits I got done regardless:

- Wrote a guide for users interested in testing: User:Severin/GSoC-2019/How to Test - Blender Developer Wiki. Will spread this a bit more to get some early feedback. Thanks to @LazyDodo, Oculus devices can be tested as well now!

- Opened task to track ToDo’s for the project (T67083). These will probably keep me busy for a while.

- A bunch of stability fixes (crashes, memory leaks, further improved resource management).

Note that you’ll also see me doing some commits to the filebrowser_redesign branch over the next days. I’m actually doing this as part of another university assignment. My teacher was very interested in my Blender work, so I managed to squeeze some in.

Next week

Same as last week, as per schedule.


Week 9 (July 20 - July 28)

Okay here we go… I’m done with this semester and can fully commit myself to GSoC - finally! I waited with publishing this report until I had crushed some issues with threaded drawing, which I started working on on Friday but which didn’t want to work at all (it does now).

Focus was a) solving performance issues and b) fixing the most important issues that block user testing. I’ve also added performance debugging utilities.

In this week alone, we went from ~20 FPS to ~43 FPS in my benchmarks with the Classroom scene.

That’s a rather fine performance for moderately complex scenes. @LazyDodo reported even bigger improvements IIRC.

- Initial work to do all VR viewport drawing on its own thread (b961b3f0c9).

This is far from usable so I’ve moved it to a separate branch, temp-vr-draw-thread. Performance tests above don’t include this.

- Avoid most OpenGL context switches, greatly improving performance (aff49f607a).

- Don’t recreate GPU_offscreen and GPU_viewport on every redraw, greatly improving performance and fixing a troublesome memory leak (this relates to previous point in that it would add more context switches - 55362436d9).

- Fixed entirely broken HMD tracking (fc31be5ab0) and lighting that rotates with the view (091cc94379)

- Support OpenGL-only rendering (3a4034a741, c1d164070b).

- Add --debug-xr-time command line option to print VR session frame render times and FPS info to console (d58eb8d29d, 57b77cd193).

- Spent hours debugging the too dark viewport with the Windows Mixed Reality runtime, but it does seem like a color management issue. I have a potential workaround, but that’ll probably break rendering for other runtimes. Once the Oculus runtime works again, we can check.
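The point about not recreating GPU_offscreen and GPU_viewport on every redraw boils down to caching the buffers between frames. A rough sketch of that idea follows. It is not how the branch implements it: `Offscreen` is a stand-in for an expensive render target, `OffscreenCache` is a hypothetical name, and recreating on size changes is an assumption made for this example.

```cpp
#include <memory>

// Stand-in for an expensive GPU render target.
struct Offscreen {
  int width;
  int height;
};

// Hypothetical cache: keeps the buffer across redraws and recreates it only when
// the requested size changes.
class OffscreenCache {
 public:
  Offscreen &ensure(int width, int height)
  {
    if (!buffer_ || buffer_->width != width || buffer_->height != height) {
      // Assigning a new buffer frees the old one automatically, so nothing leaks.
      buffer_ = std::make_unique<Offscreen>(Offscreen{width, height});
      ++creation_count_;
    }
    return *buffer_;
  }

  int creation_count() const { return creation_count_; }

 private:
  std::unique_ptr<Offscreen> buffer_;
  int creation_count_ = 0;
};
```

Calling `ensure()` once per redraw with an unchanged size then costs only a comparison instead of a full buffer allocation.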

Non-GSoC Work

- As part of my final university assignment for this semester, I worked on the File Browser in the filebrowser_redesign branch, together with a university colleague and William Reynish. See T62971. It’s close to being ready for a 2.81 merge.

- Fixed bug causing invalid Area.ui_type during area change (D5325).

- Reviewed D5273.

In Related News

OpenXR 1.0 got released yesterday! The Oculus support is also announced for this week.

Hence, I’ll update our OpenXR loader to version 1.0 and update my How to Test Page.

Next Week

At this point I’m going to digress from my schedule. The final weeks were kinda fillers anyway, there’s more important stuff to do now.

- Finish work on the separate drawing thread (temp-vr-draw-thread).

- Possibly: Work on the remaining bits to make the draw manager entirely concurrent (meaning: support true parallel CPU execution). Will check with other devs first.

- Update OpenXR loader to version 1.0.

- Add validation layer source files to Blender.


Week 10 (July 29 - August 2)

Short week, I did what I planned to do:

- Continued work for VR rendering on a separate thread (temp-vr-draw-thread branch):

  - Keep single context alive on the thread (7a711e133c). Should improve performance.

  - Submitted patch to manage GPU_matrix stacks per GPUContext (D5405). This is needed for thread safe GPU_matrix usage.

  - Fix all objects hidden (7922bd26a2)

  - With the branch I now get ~54 FPS in the classroom scene (~43 FPS in soc-2019-openxr)

- Documented a couple of further things we can do to improve performance and get the branch ready for a merge: GSoC 2019: Core Support of Virtual Reality Headsets through OpenXR.

- Updated OpenXR to version 1.0 (e8f66ff060, 22966f4d35). Quite some build system related stuff changed, so this took a bit of effort.

- Added sources for OpenXR core API validation layer (40db778de3).

- Tried to get the validation layer to work on Windows, turns out there’s a bug in the OpenXR loader breaking Windows support for API layers. See https://github.com/KhronosGroup/OpenXR-SDK-Source/issues/89.

- Checked with Monado devs on why the Monado runtime doesn’t work for me. The suspicion is that it’s an Optimus limitation on Linux.
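To show why the per-context GPU_matrix stacks from this list (D5405) help with thread safety, here is a heavily simplified sketch. It does not reflect the actual patch: `Context` and the `matrix_*` functions are hypothetical, and a single float stands in for a 4x4 matrix to keep the example short.

```cpp
#include <vector>

// Stand-in for a transform; a real stack would hold 4x4 matrices.
using Matrix = float;

// Hypothetical context that owns its matrix stack instead of sharing a global one.
struct Context {
  // Starts with a single identity entry.
  std::vector<Matrix> matrix_stack{1.0f};
};

// Duplicates the top entry, like a classic matrix push.
void matrix_push(Context &ctx)
{
  ctx.matrix_stack.push_back(ctx.matrix_stack.back());
}

void matrix_pop(Context &ctx)
{
  ctx.matrix_stack.pop_back();
}

// Applies a uniform scale to the top entry only.
void matrix_scale(Context &ctx, float factor)
{
  ctx.matrix_stack.back() *= factor;
}

Matrix matrix_top(const Context &ctx)
{
  return ctx.matrix_stack.back();
}
```

Because each context carries its own stack, a VR drawing thread that uses its own context cannot corrupt the matrix state of the main window drawing, as long as every thread only touches its own context.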

Next Week

Need to check with Dalai (who’s at Siggraph currently), if I should prepare the branch for a merge yet. I’d rather spend more time on threaded drawing. This may take a few more days to complete. Besides that, I’ve listed possible tasks for the remaining GSoC time here: GSoC 2019: Core Support of Virtual Reality Headsets through OpenXR.


Week 11 (August 3 - August 9)

- Created patch to make draw-manager theme usage thread safe, D5413.

- Updated patch to manage GPU_matrix stacks per GPUContext (D5405). This is also for thread safety. Since the question of thread local storage performance was raised, I spent some time on carefully testing this. Reported results in the patch.

- Fixed the too dark rendering on the Windows Mixed Reality runtime (3483aa57b3, 5350015d51). After lengthy discussion with Troy Sobotka, we concluded this runtime probably has a broken pixel color pipeline.

- Fixed errors on session exit, partially due to changes in OpenXR 1.0 (fe7b01a08f, bcc8f7c6be).

- Further work on stability (ba223dfac8) and to remove performance bottlenecks (62bbd2e37d).

- Started polish and cleanup work to prepare the branch for review (716c6b0c01, 873223f38d, 96a34d7f50).

- Also had a lengthy chat with my mentor @dfelinto on the GSoC project, as well as further plans on VR in Blender. I’ll soon set something up with other stakeholders to start planning the big picture for VR support.

Next Week

With my mentor I agreed on getting the branch ready for merge after GSoC. That way, we have an outcome from the project ready to be built on, even if I don’t manage to continue work on it. Hence, I’ll continue work on polish as well as a refactor of the CMake changes I did for the OpenXR SDK dependency.

I may also do some first changes to get the barebones for input & haptics with OpenXR implemented. That way we can soon experiment with VR event input (e.g. controllers and gestures) to get a more informed knowledge of what needs to be done for interactive VR experiences.


Week 12 (August 10 - August 17)

Mainly worked on getting the build system side of the branch ready for merging into master, as well as preparing further XR development for after GSoC.

- With my GSoC project coming to an end, it’s time to think about the “big picture” for XR in Blender. So I did just that and wrote a document that I’ve already sent to a number of stakeholders. Further discussion should happen on developer.blender.org, so I’m probably going to split the document into multiple tasks there.

- Committed patch for thread safe GPU_matrix access (e6425aa2bf).

- Linux: Add OpenXR-SDK to install_deps.sh (9e7c48575a).

- Remove bundled OpenXR-SDK sources from extern/ (0dd3f3925b).

- Prepare build system utilities for platform maintainers to include the SDK (d0177fc541).

- General fixes (8138099fa95, eb477c67a15, …).

Non-GSoC Work

We’re trying to get the file-browser redesign ready for a merge ASAP. So I worked on the remaining bits, and did many small tweaks and fixes. It’s sooo close to being ready!

Next Week

The final week! I’d like to get the branch into review in this week. The build system related stuff should be ready, I’ll have to go over the changes to see how much polish is needed.

For a merge into master, I don’t think it’s a good idea to expose the VR session toggle by default (which is the only VR related thing exposed in the regular UI right now). VR support is a big topic and much more work is needed. We’d only disappoint users if we showed a VR session toggle in this early state.

We could disable WITH_OPENXR by default, or allow enabling it via a Blender command line argument (e.g. --enable-vr). However, my preferred solution would be to do this via an Add-on that makes the early state of things clear and that is disabled by default.

There’s not much user documentation to be written, given that only a single button was added to the UI and that the VR session doesn’t allow any interaction yet. I might still put down some general information, but I’ll do that in the shape of Add-on documentation.

AFAIK I have to write a final project report though.

If there’s time left, I’d still like to play a bit with input and haptic support.


Week 13 (August 18 - August 23) - The final week

So that was it, the final week! And with this week passed, I’d consider my project as complete. Which in this case means: All targeted deliverables as per proposal are there, the branch seems to work with all available OpenXR runtimes, things are stable and polished for a merge. Further, the main branch (soc-2019-openxr) is submitted for review, I’ve written documentation on how to test/use the outcome and I’ve done quite some work to kick off further and continuous development for XR experiences (see T68998).

All that is left to do for GSoC should be writing my final report and submitting my final evaluation.

What I’ve done this week:

- Submitted changes from the soc-2019-openxr branch for review! (D5537)

Took a bit of effort to write an insightful patch description.

- Updated the How to Test page.

- Dalai created design tasks for XR development, I filled the task descriptions based on the design document I wrote last week. (T68998, T68994, T68995).

The full document is public now too: VR/XR: Big Picture Kickoff.

- Added an Add-on (Basic VR Viewer) controlling the Toggle VR Session button. Idea is to hide the button by default and to only show it if users enable the Add-on. That way we can make clear that the VR support is limited and in further development. (a21ae28f96f, 4612e79c52b)

- Refactored DirectX-OpenGL resource sharing, getting rid of hacks and improving performance significantly (fec7ac7c518, 2c77f2deac7, 20b0b36479a, e88970db82b).

- Refactored how we submit viewport renders to the OpenXR swapchain. Removes hacks again and improves performance. (31b8350b017, 4b674777328, 4a039158e56, 61014e1dd9b, 3441314e406, 7462fca7373)

- Lots of cleanup and fixes to prepare the branch for master.


Awesome, I hope XR support will continue
