Looking at the popularity of this tweet, node output previews are a very hot, desired item.

It’s a good idea, but almost every single other 3D software I know of has broken its teeth on it, usually because of the scale of procedural textures. When using object/world space mapping coords for procedural textures, they often become either too small or too large to display anything meaningful in the thumbnails.

The way around that would be to expose some sort of “preview size” parameter, which would define how large the surface the procedural texture is previewed on is. So you could define whether the area the thumbnail displays is, let’s say, 50x50 centimeters or 50x50 meters, depending on the scale of the scene and material you are working on.
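To make the scale problem concrete, here is a minimal Python sketch of how such a parameter could map thumbnail pixels to object-space coordinates. The names `preview_size_m`, `thumbnail_coords` and `features_across` are made up for illustration and are not part of any Blender API.

```python
def thumbnail_coords(resolution, preview_size_m):
    """Object-space (x, y) positions in meters for each pixel center of a
    square thumbnail that covers preview_size_m x preview_size_m."""
    step = preview_size_m / resolution
    return [
        [((col + 0.5) * step, (row + 0.5) * step) for col in range(resolution)]
        for row in range(resolution)
    ]


def features_across(preview_size_m, feature_size_m):
    """How many texture features (e.g. noise cells or bricks) fit across
    the thumbnail at the chosen preview size."""
    return preview_size_m / feature_size_m


if __name__ == "__main__":
    brick = 0.05  # hypothetical 5 cm procedural feature
    # About ten bricks across a 50 cm preview: readable in a thumbnail.
    print(features_across(0.5, brick))
    # About a thousand bricks across a 50 m preview: unreadable mush.
    print(features_across(50.0, brick))
```

The same texture is either readable or useless depending on one number, which is why where that number is stored matters so much.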

What I am worried about, though, is that it will be implemented in the typical Blender (wrong) way. Such an option, if it exists, should be stored per .blend file, because users will tend to work on scenes of varying scales.

But…

I am sure that if some Blender developer decides to do that, it will either be a user preferences option, in which case users will not use it, because they would have to keep opening user preferences and changing it between the different .blend files they work on. Or, at the other extreme, it will be unique per material, in which case the user would always have to adjust the setting for every new material they create, once again making it too much of a chore for thumbnails to be worth using.

So the bottom line is that while it’s a nice idea, the implementation is what matters, and the implementation details are where this will most likely fail, given the track record of development decisions.

I agree that scale should be taken into account.

Scale is also an issue in the material preview (material property panel).

I wish there were an input field where the user could set the size of the preview object (sphere, matball, splash, etc.).

I have tested the CyclesX build and I am also very impressed, especially with the improved interactivity/update rate.

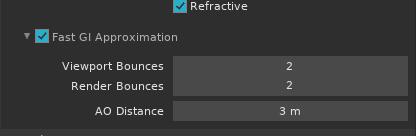

The only thing that caught me off guard is that there’s the same old UI/UX failure. The simplify bounces option was moved and renamed, which is good:

But there is absolutely no indication anywhere in the whole UI that it relies on the Ambient Occlusion Factor value, which is in a completely different rollout in a completely different properties tab (World). And nowhere in any of the Fast GI Approximation setting tooltips is there any mention of the AO Factor value.

Why not make this its own separate thing, completely decoupled from AO?

50 posts were merged into an existing topic: Taking a look at OptiX 7.3 temporal denoising for Cycles

Is rendering a single pass related to this request thread?

For example, I can’t render the AO pass separately; it also renders color, which wastes my time.

The AO Distance setting tells you this is related to AO, doesn’t it?

Also each tooltip is quite self-explanatory and refers to AO.

The reason why it is not decoupled I think is that it would imply a double AO calculation in case one wants to use regular AO for rendering, or have an AO in output passes.

Yet it would be nice to be able to set an override background for it independent from the world settings.

This way you could have an HDR or whatever you like in the background and still manage the strength and tint of the fast GI approximation. Tinted light is often an issue with this cool feature.

No, it doesn’t. If you are a new user, you have no clue there’s an additional Ambient Occlusion rollout in the world tab, which contains the “Factor” value which is mentioned nowhere in the Fast GI Approximation UI.

You are making the common mistake of thinking about it from the perspective of a long-time Blender user who understands how all the different layers of nonsense cascade on top of each other and tie together. But if a new user tries to use the Fast GI Approximation feature, they will fail, because they have no idea there’s one more external parameter which is absolutely key to using Fast GI Approximation correctly.

You mean the AO factor, don’t you? You’re right, it should fit there too.

And maybe this multiplier could be easily decoupled from main AO to work only for fast GI?

On the broader topic, I’m not so keen on accommodating new users. There’s a whole manual available, and stuff can be easily found there, with explanations too.

I’m now in the process of learning Unreal, and from that perspective their UI is a pure nightmare, IMHO. Everyone can do better, I guess.

In this day and age, the manual should be the last resort, not the first one. The possibilities of UI these days are so vast and refined that having to leave the software to seek external documentation should be a rare exception.

If you are learning UE4, then send me a PM. I can help you with any questions you have.

As a side note for everyone, do you think we should move this discussion about the Cycles-X branch of Blender to another thread?

It seems most of the discussion isn’t directly related to the topic of this thread? Not entirely sure really.

Hi @brecht ,

Is it planned to also improve pixel filtering in Cycles X? Having used other renderers in and outside of Blender, Cycles seems to be the only one that does not filter every sample. It looks as if it only affects object edges and areas with some contrast.

Also, it only has any effect with at least two samples, whereas other renderers already filter with only one sample.

Not that that would be of much use, but it already gives a much smoother/filtered look than the current pixel noise.

Cycles is using filter importance sampling, which in the end achieves the same goal but in a much more GPU friendly way.

See

http://citeseerx.ist.psu.edu/viewdoc/download?doi=10.1.1.183.3579&rep=rep1&type=pdf

for details.
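For anyone who wants to see the difference in code, here is a toy 1D Python sketch comparing the two approaches. It is only an illustration under my own assumptions (a Gaussian filter, a step-edge "scene", made-up function names) and is not how the Cycles kernel is actually written.

```python
import math
import random


def scene(x):
    """Toy 1D signal: a hard edge at x = 0.5."""
    return 1.0 if x >= 0.5 else 0.0


def gaussian(d, sigma):
    """Unnormalized Gaussian filter kernel."""
    return math.exp(-0.5 * (d / sigma) ** 2)


def weighted_filter_pixel(center, sigma, n, rng):
    """Weighted pixel filtering: uniform samples over the filter support,
    each weighted by the kernel, then normalized by the weight sum."""
    radius = 3.0 * sigma
    total = 0.0
    weight_sum = 0.0
    for _ in range(n):
        d = rng.uniform(-radius, radius)
        w = gaussian(d, sigma)
        total += w * scene(center + d)
        weight_sum += w
    return total / weight_sum


def fis_pixel(center, sigma, n, rng):
    """Filter importance sampling: offsets are drawn with a density shaped
    like the filter, so every sample gets equal weight and only ever
    contributes to the pixel it was generated for."""
    total = 0.0
    for _ in range(n):
        d = rng.gauss(0.0, sigma)
        total += scene(center + d)
    return total / n


if __name__ == "__main__":
    rng = random.Random(1)
    # Both estimates converge to roughly the same filtered value.
    print(weighted_filter_pixel(0.6, 0.1, 20000, rng))
    print(fis_pixel(0.6, 0.1, 20000, rng))
```

Because each sample stays in its own pixel, there is no accumulation buffer shared between neighboring pixels, which is the part that makes it GPU friendly. It also means the filtering only becomes visible as samples accumulate.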

Ah! So I was looking for weighted pixel sampling vs filter importance sampling.

Would be nice to put it in the docs maybe

Makes sense now. Thanks, Stefan!

I’m also hesitating. The denoising topic is starting to dominate this general Cycles Requests thread, so it’d be appreciated if you could wrap it up, or I can turn it into a dedicated thread if you want to continue the denoising discussion.

@YAFU @MetinSeven @LudvikKoutny @lsscpp @mib2berlin @SteffenD (I’m just tagging a bunch of people just so they know), I’ve created a new topic for the denoising discussion.

This is just a new thread to continue the discussion started in Cycles requests thread: Cycles Requests

Thanks, I’ll move the relevant posts to that thread.

Meeeeee I would like that scene to test it. I love neoclassical :DD

By the way, that method is so nice. I always thought that fireflies could be removed by image processing, because in 2020 it should be easy to make an algorithm that finds the sudden white pixels.
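Just to show the kind of thing I mean, here is a naive Python sketch that compares each pixel to the median of its neighbors. This is my own simple heuristic, not the method discussed above, and the function name and `threshold` value are made up for the example.

```python
from statistics import median


def remove_fireflies(img, threshold=4.0):
    """img is a list of rows of luminance floats. Returns a new image where
    any pixel far brighter than the median of its neighbors is replaced
    by that median."""
    h, w = len(img), len(img[0])
    out = [row[:] for row in img]
    for y in range(h):
        for x in range(w):
            # Collect the up-to-8 surrounding pixels, clipped at the borders.
            neighbors = [
                img[ny][nx]
                for ny in range(max(0, y - 1), min(h, y + 2))
                for nx in range(max(0, x - 1), min(w, x + 2))
                if (ny, nx) != (y, x)
            ]
            m = median(neighbors)
            # The small floor avoids flagging everything next to pure black.
            if img[y][x] > threshold * max(m, 0.01):
                out[y][x] = m
    return out


if __name__ == "__main__":
    noisy = [
        [0.2, 0.2, 0.2],
        [0.2, 50.0, 0.2],
        [0.2, 0.2, 0.2],
    ]
    print(remove_fireflies(noisy))
```

The obvious downside is that a filter like this can also eat legitimate tiny highlights, so the threshold would need tuning per scene.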

I was wondering whether the Cycles architecture would allow for limiting the secondary effects of emissive surfaces to specific (or no) other elements in the scene. This isn’t entirely an out-of-nowhere request; there are renderers out there that permit emissive surfaces with exclusion lists, allowing for selective illumination. It allows for a number of effects that otherwise force the use of the compositor, with more awkward workflows in terms of keeping the scenes in sync.